10 AI Security Templates Security Teams Actually Need

AI security templates standardize governance, risk management, and operational controls across the AI lifecycle, but they must be combined with testing, monitoring, and technical safeguards to protect against risks like prompt injection, data leakage, and model misuse.

Key Takeaways

- AI security templates provide a structured, repeatable way to standardize governance, risk management, and safe deployment practices across AI systems, helping security teams scale protections as adoption grows.

- Because AI introduces risks such as prompt injection, data leakage, and model misuse, organizations must combine customized AI security templates with technical controls, training, and monitoring to effectively mitigate real-world threats.

AI moved into production systems faster than security teams could develop effective governance. Many organizations lack established processes, controls, or policies to secure AI. Others rely on improvised approaches that worked during experimentation but fail once AI becomes part of daily operations.

AI systems create new security exposures that require clear structure and repeatable safeguards. AI security templates help teams define expectations, document controls, and guide secure development and deployment.

In this guide, you will learn how AI security templates strengthen protection and see 10 free and low-cost templates you can put into practice immediately.

What Are AI Security Templates, and How Do They Work?

AI security templates are reusable documents that standardize security procedures for every phase of an AI system’s lifecycle. They can specify everything from initial policy language to testing procedures, risk assessments, and operational controls.

Rather than authoring security guidance from scratch each time a team plans to deploy a model or use a new AI service, templates ensure that every deployment follows a consistent, defensible process.

Templates exist for technical risks, such as model security testing procedures (how do we validate that models resist prompt injection and don’t produce unsafe outputs?), and for human and process risks, such as governance (who has access, what are the approval workflows, and who is responsible for a given model?).

By defining a common approach for teams to reference when building, deploying, and operating AI, everyone understands their roles and responsibilities for keeping AI secure.

Templates are only useful if they map to the systems your teams use and the risks they face every day. Every company uses different models, integrates with different data sources, and pursues different business outcomes.

Security templates should be customized to ensure they align with your system and environment. Customization includes defining responsible parties (owners), mapping where sensitive data resides in your system, and providing proper documentation around how your models integrate with third-party tools or downstream systems.
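
As a rough illustration of the customization steps above, the sketch below tracks whether a template has been filled in for a given system. The `TemplateRecord` class, its field names, and the example values are hypothetical and not taken from any specific template; treat it as one possible way to make gaps visible, not a required structure.

```python
from dataclasses import dataclass, field


@dataclass
class TemplateRecord:
    """Hypothetical record of how one template was customized for one AI system."""
    system_name: str
    owner: str = ""  # responsible party for the system
    sensitive_data_locations: list[str] = field(default_factory=list)
    integrations: list[str] = field(default_factory=list)  # third-party tools, downstream systems


def missing_customizations(record: TemplateRecord) -> list[str]:
    """Return the names of customization fields that are still empty."""
    gaps = []
    if not record.owner.strip():
        gaps.append("owner")
    if not record.sensitive_data_locations:
        gaps.append("sensitive_data_locations")
    if not record.integrations:
        gaps.append("integrations")
    return gaps
```

A deployment approval step could call `missing_customizations` and hold the approval until the returned list is empty.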

When properly defined, templates become living documents that engineers refer to during deployment approvals, security teams use during risk assessments, and compliance teams rely on to ensure adequate controls are validated and audit evidence is captured. This allows teams to scale AI adoption while maintaining security guardrails.

10 Best AI Security Templates

Structure and consistency make a big difference in AI risk management. Create your own version of these AI security templates to secure your AI system at scale.

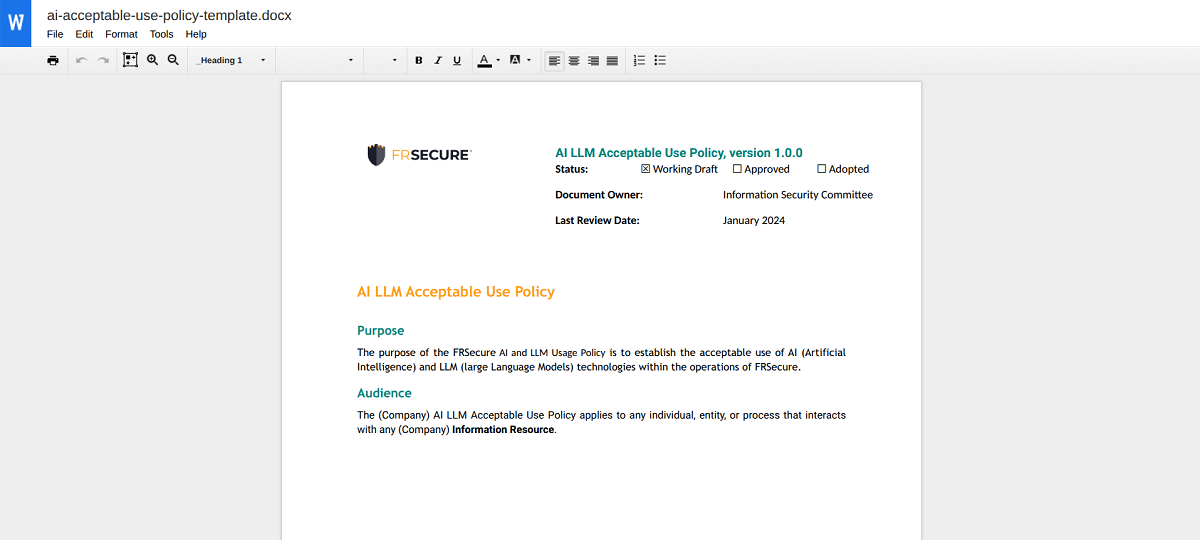

1. AI Acceptable Use Policy

Acceptable use policies tell employees and contractors what they can and can’t use AI for, as well as which AI models are approved. Since many organizations use AI in some form, acceptable use policies ensure your team is on the same page and understands what’s expected of them. An acceptable use policy won’t prevent every security issue, but it lays the foundation for user accountability.

Notable features:

- Word Document setup that’s ready for plug-and-play

- Clear guidelines for personalization and branding

- Enforcement guidelines section

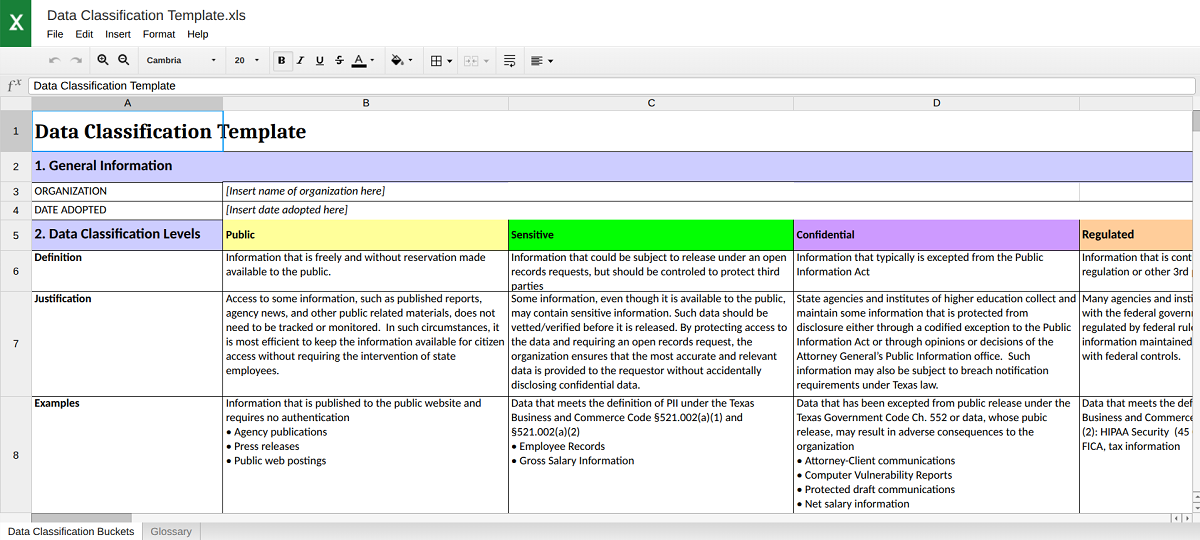

2. Data Classification Template

The State of Texas provides this free data classification template, which includes helpful frameworks for classification levels, controls, roles, and more. Because public and private data call for very different handling, this AI security template helps you apply controls that match the sensitivity of each type of information.

Notable features:

- Includes data classification levels with justifications for those levels

- Customize controls for data with different sensitivities

- Document audit controls
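
To show how classification levels can translate into an enforceable control, here is a minimal sketch. The four levels and the destination names (`public_chatbot`, `enterprise_llm`) are assumptions made for illustration; they are not the levels defined in the Texas template, so substitute the ones your own policy uses.

```python
from enum import IntEnum


class Classification(IntEnum):
    """Hypothetical classification levels, ordered from least to most sensitive."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy: the most sensitive level each AI destination may receive.
MAX_ALLOWED = {
    "public_chatbot": Classification.PUBLIC,
    "enterprise_llm": Classification.CONFIDENTIAL,
}


def may_send(level: Classification, destination: str) -> bool:
    """Deny by default: destinations missing from the policy receive nothing."""
    ceiling = MAX_ALLOWED.get(destination)
    return ceiling is not None and level <= ceiling
```

Denying unknown destinations by default means a newly adopted AI tool must be added to the policy before any data can flow to it.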

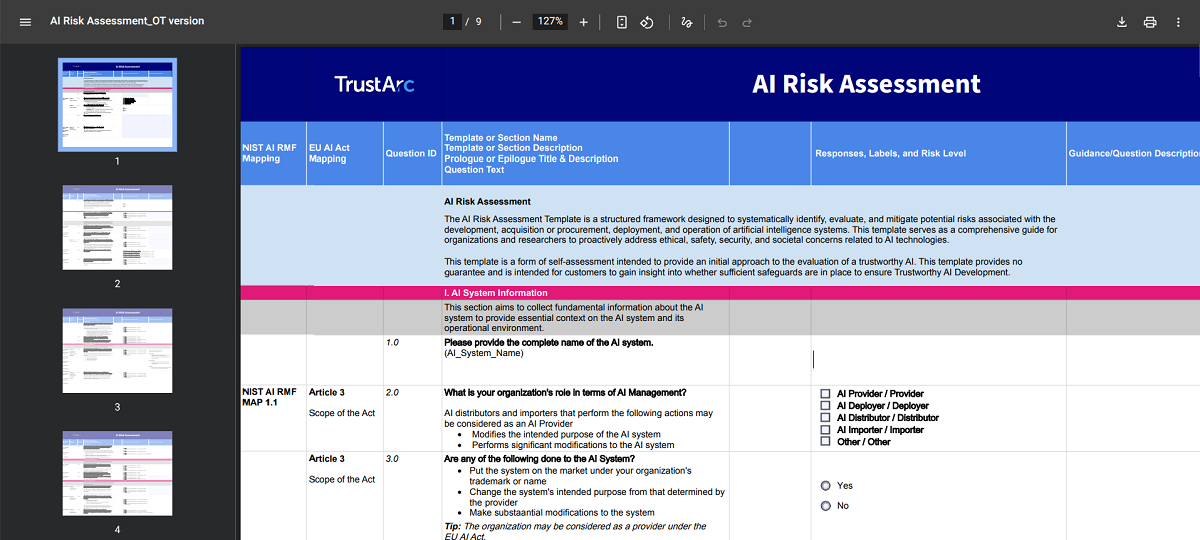

3. Risk Assessment Framework

Risk assessments are a must-have for any digital asset, and AI is no exception. They are most effective when aligned with a formal AI risk management framework. An AI-specific framework like this free template helps you address threats unique to AI, from prompt injection attacks to hallucinations.

Notable features:

- Aligns with the NIST AI RMF and the EU AI Act

- Considers human involvement in risk, not just technical controls

- Thorough assessment of governance, reliability, and more

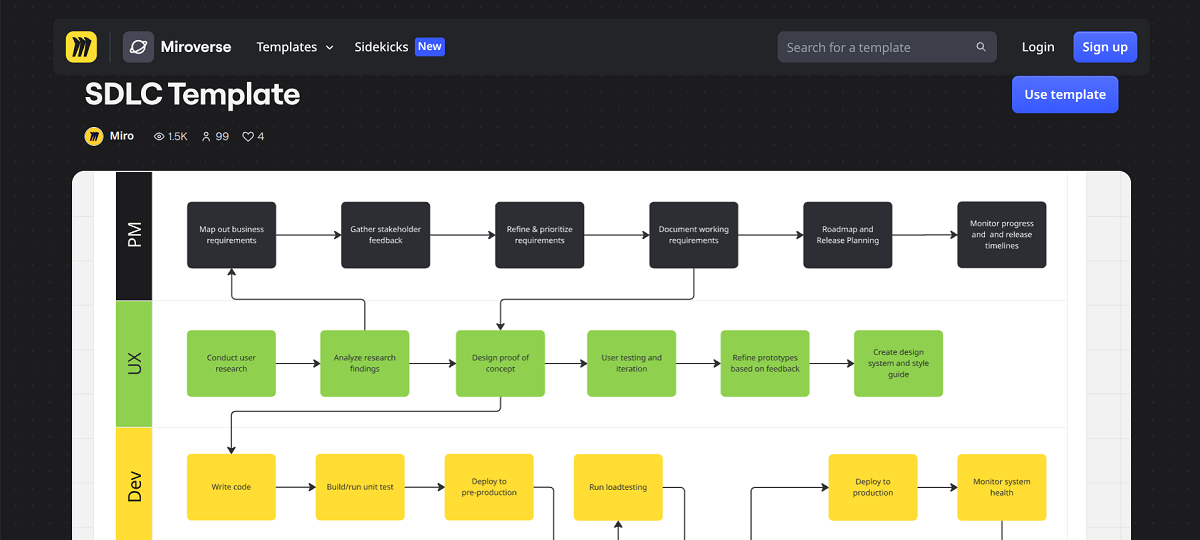

4. Secure AI Development Life Cycle Template

You need to design for security at every stage of the AI lifecycle. Creating a visual Secure Development Life Cycle (SDLC) helps your team understand these processes and how they work together to improve both quality and safety.

Notable features:

- Highly visual

- Drag-and-drop interface

- Color-coded and customizable

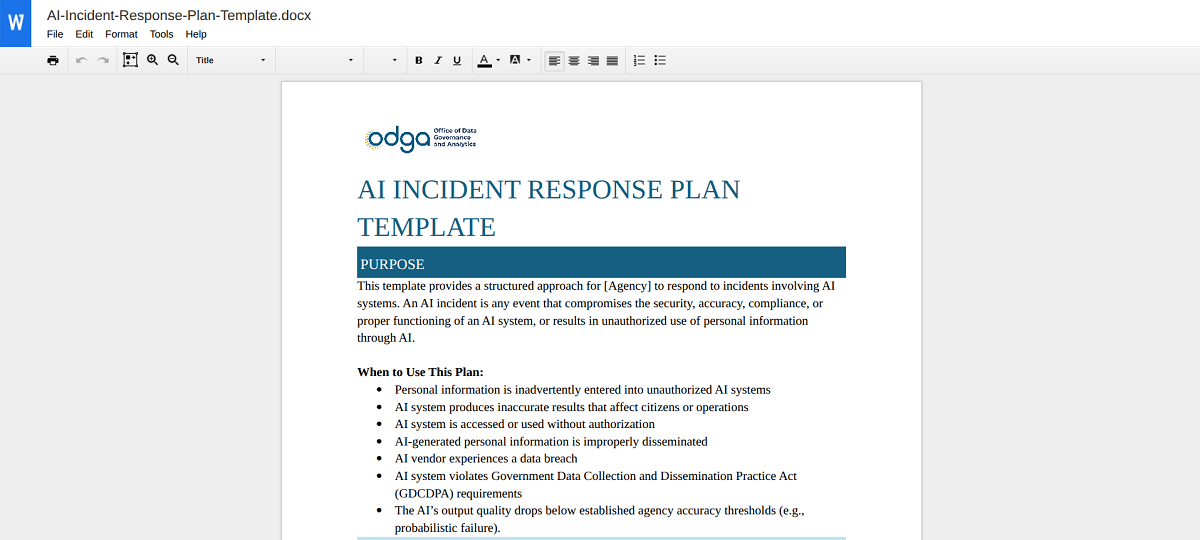

5. AI Incident Response Plan Template

Virginia’s Office of Data Governance and Analytics provides this free AI security template for incident response. There’s no such thing as a perfectly secure AI system, and it’s critical to have a ready-made incident response plan to follow when (not if) an incident happens.

Notable features:

- Lists roles and responsibilities for different stakeholders

- Outlines a timeline for immediate response based on severity level

- Provides a helpful list of agencies that need to be contacted in different situations
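
A severity-based response timeline can be encoded so that deadlines are computed rather than looked up by hand. The sketch below is illustrative only: the severity names and time windows are assumed values, not the ones in the Virginia template.

```python
from datetime import datetime, timedelta

# Hypothetical response windows; replace with the values in your own plan.
RESPONSE_WINDOWS = {
    "critical": timedelta(hours=1),
    "high": timedelta(hours=4),
    "medium": timedelta(hours=24),
    "low": timedelta(hours=72),
}


def response_deadline(detected_at: datetime, severity: str) -> datetime:
    """Return the time by which the immediate response must begin."""
    try:
        return detected_at + RESPONSE_WINDOWS[severity.lower()]
    except KeyError:
        # An unrecognized severity is an error, not something to default silently.
        raise ValueError(f"unknown severity: {severity!r}") from None
```

Raising on an unknown severity keeps a mislabeled incident from quietly receiving the slowest timeline.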

6. AI Vendor Questionnaire Template

If your organization uses AI, your vendors likely do as well, and their use of AI has a direct impact on your security. Use this simple questionnaire to vet vendors’ use of AI and confirm they take proper precautions.

Notable features:

- Vendor-ready format

- Includes thorough questions on regulations, hosting, and more

- Standardized format makes it easier to evaluate multiple vendors based on their responses

7. AI Data Retention Policy Template

AI systems require substantial data to work properly. If you don’t already have one, creating a data retention policy clarifies how long you can store data and outlines deletion guidelines.

Notable features:

- Differentiates guidelines based on data category

- Includes a section for the recipient to acknowledge receipt

- Details responsibilities for various stakeholders
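
Category-based retention rules lend themselves to a simple automated check. In the sketch below, the category names and retention periods are hypothetical placeholders; your policy and any applicable regulations determine the real values.

```python
from datetime import date, timedelta

# Hypothetical retention periods in days, keyed by data category.
RETENTION_DAYS = {
    "prompt_logs": 30,
    "training_data": 365,
    "evaluation_results": 730,
}


def is_due_for_deletion(category: str, created_on: date, today: date) -> bool:
    """Return True once the record is older than its category's retention period."""
    days = RETENTION_DAYS.get(category)
    if days is None:
        # Data with no assigned category has no defined retention rule.
        raise ValueError(f"no retention rule for category: {category!r}")
    return today > created_on + timedelta(days=days)
```

A scheduled job could run this check over stored records and queue anything that returns `True` for deletion.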

8. Ethical Guidelines for AI Template

Ethics is a significant concern in AI use. This free AI security template from the Responsible AI Institute provides helpful guidelines not just for complying with popular standards, but also for ethical development and management.

Notable features:

- Aligns with ISO/IEC 42001 and the NIST AI Risk Management Framework

- Includes data practices for transparency and privacy

- Offers project management guidance to ensure ongoing ethical use

9. Employee AI Training Template

Human error is a common risk vector for all digital systems, including AI. Use this AI security template from Articulate to build a simple, user-friendly guide to AI best practices for your team.

Notable features:

- Drag-and-drop setup

- Intuitive design and mobile-first experience

- Free 30-day trial

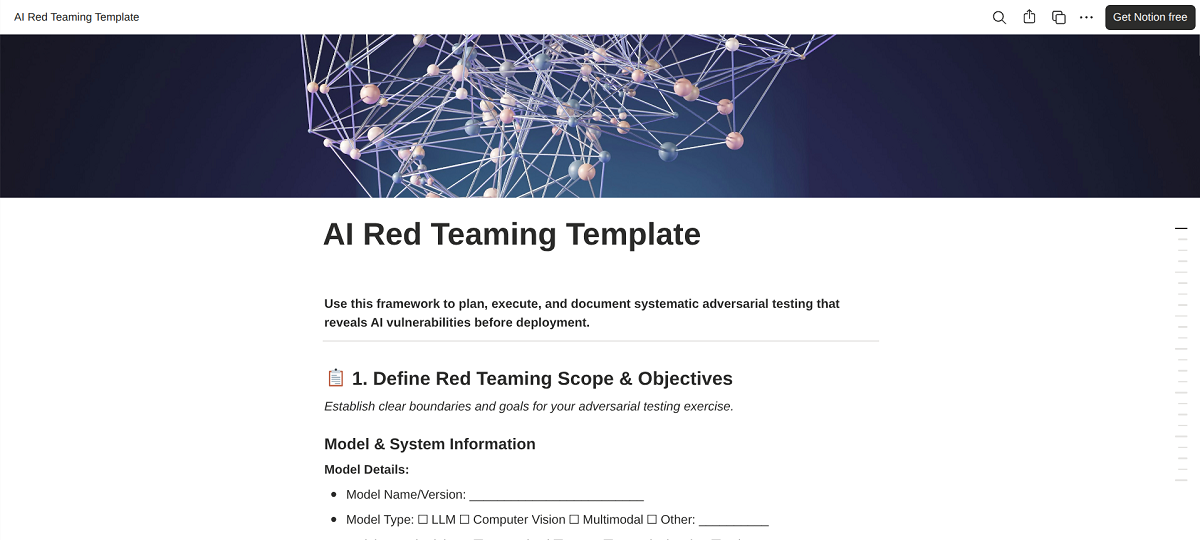

10. Red Teaming Execution Policy Template

Red teaming exercises simulate real-world attacks against AI systems, but they require careful planning to be safe and useful. Use this AI red teaming template to clearly define the scope of the test, list out-of-scope areas, and specify attack scenarios.

Notable features:

- Simple and reusable checklist format

- Defines team roles and responsibilities, plus time commitments

- Provides step-by-step red teaming instructions for consistent testing
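
The scope definition described above can also be captured in a machine-checkable form so that testers confirm a target is authorized before running a scenario. This is a minimal sketch; `RedTeamScope` and the target names are hypothetical and not part of the template itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RedTeamScope:
    """Hypothetical scope record for one red teaming exercise."""
    in_scope: frozenset[str]
    out_of_scope: frozenset[str]
    attack_scenarios: tuple[str, ...]


def is_authorized(scope: RedTeamScope, target: str) -> bool:
    """A target is authorized only if explicitly in scope and not excluded."""
    if target in scope.out_of_scope:
        return False  # exclusions always win over inclusions
    return target in scope.in_scope


# Example scope with assumed target names.
SCOPE = RedTeamScope(
    in_scope=frozenset({"support-chatbot-staging"}),
    out_of_scope=frozenset({"support-chatbot-production"}),
    attack_scenarios=("prompt injection", "data exfiltration"),
)
```

Targets that appear in neither list are treated as unauthorized, which matches the usual expectation that testing requires explicit approval.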

Turn AI Security Templates Into Real Protection with Mindgard

AI security templates define what teams should do. Mindgard shows whether those controls actually hold up under real-world conditions.

Mindgard’s Offensive Security platform allows security teams to test AI systems from an attacker’s perspective. Mindgard simulates attacks like prompt injection, data exfiltration, and model manipulation in a safe environment to validate your defenses.

These tests expose weaknesses that AI security templates can’t detect. Instead of assuming policies work, teams gain direct evidence of how models behave under pressure.

Most organizations do not fully understand where AI exists across their environment. Mindgard’s AI Security Risk Discovery & Assessment identifies AI systems, connected data sources, and integration points.

This gives teams the visibility required to apply the right templates and prioritize risk. Templates become more effective when they align with real usage rather than assumptions.

Mindgard’s Automated AI Red Teaming helps teams continuously validate security controls as models evolve. New model versions, updated prompts, or added integrations can introduce risk without warning. Automated testing helps confirm that the controls your templates define still hold as systems change over time.

Mindgard’s AI Artifact Scanning analyzes runtime artifacts such as prompts, model inputs and outputs, tool interactions, and system instructions to identify unsafe behavior and data exposure risks. This reveals how models actually operate in production rather than relying on assumptions from development.

AI security templates define governance, expectations, and responsibilities. Request a demo to learn how Mindgard validates the effectiveness of those safeguards against real threats.

Frequently Asked Questions

Why do organizations need AI-specific security templates?

Traditional security controls don’t address AI-specific risks like prompt injection, model manipulation, training data leakage, or model output misuse. AI security templates help you account for these threat vectors while still following required governance frameworks.

How are AI security templates different from general cybersecurity templates?

General cybersecurity templates focus on infrastructure and applications. You’ll likely still need them even with AI templates.

AI security templates extend your cybersecurity templates to cover additional, AI-specific issues like model behavior and vendor integrations. Both types of templates are necessary, although AI-specific ones may need more frequent updates.

Do AI security templates replace the need for technical controls?

No. Templates provide structure and consistency, but you must pair them with enforceable controls and monitoring. Think of templates as a way to operationalize AI security, not as a replacement or an automated solution for it.