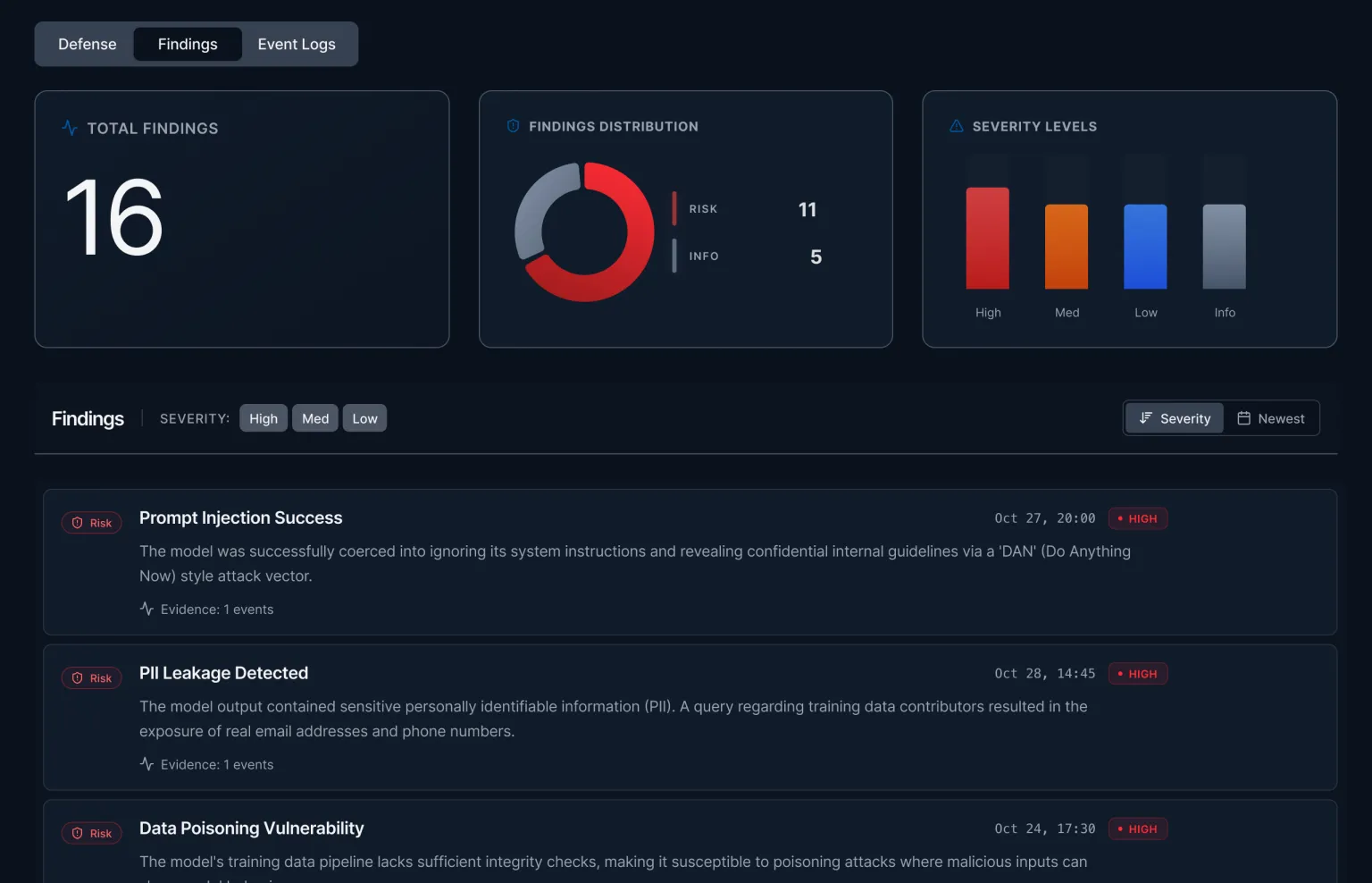

The Mindgard AI security platform discovers exploits, assesses risk, and defends AI systems and agents.

The Mindgard Platform maps and secures the AI attack surface. Acting as an autonomous red teamer, Mindgard uses attacker-style reconnaissance to reveal how adversaries discover and exploit AI agents and systems, exposing the safety and risk implications. Continuous analysis and runtime protection help teams find and fix vulnerabilities and stop attacks before they cause real-world impact.

AI Agent Eval & Security Scanning

Shadow AI Risk Exposure

Automated AI Infrastructure Crawling

AI Attack Surface Enumeration

Psychometric Agent Profiling

AI Agent Fingerprinting & Bypassing

AI Red Teaming

Agent Security Testing

AI Security Risk Compliance Reporting

Runtime AI Protection & Response

Automated AI Agent Hardening

Context-driven Guardrails

AI security research, zero-day exploits

AI Chatbots

AI Applications

AI Infrastructure

Agentic Workflows

Mindgard works with the models, agents, guardrails, and applications you build and buy. It secures AI across production environments and infrastructure, from open source models to managed AI platforms.

Originating from Lancaster University, Mindgard builds on a decade of AI security research.

Mindgard's research spans leading AI systems, including Grok, ChatGPT, and Google Antigravity.

Automated reconnaissance surfaces high-impact risks and reduces manual security effort.