Build Safer AI, Faster: 10 AI Red Teaming Templates That Work

AI red teaming templates provide structured prompts, protocols, and reporting frameworks that make adversarial testing more consistent, efficient, and effective at uncovering real-world vulnerabilities in AI systems before attackers exploit them.

Key Takeaways

- AI red teaming templates make adversarial testing structured and repeatable by providing predefined prompts, protocols, and documentation frameworks that help security teams consistently identify vulnerabilities in AI systems.

- Using red teaming templates improves testing efficiency and safety by establishing clear scope, rules of engagement, and reporting processes, allowing teams to uncover risks faster without disrupting production systems.

In This Article

AI red teaming tests large language models (LLMs) by putting cybersecurity experts in the role of attackers. The red team attempts prompt injections, data extraction techniques, and other adversarial attack techniques to probe weaknesses in your AI systems. This proactive testing approach helps uncover weaknesses before attackers exploit them using the same adversarial attack methods seen in the real world.

Red teaming requires structure to produce reliable results and align with established offensive security fundamentals that guide adversarial testing. From rules of engagement to protocols and operator logs, your team needs the right strategies to test effectively as part of a structured offensive security program.

AI red teaming templates ensure consistency across all red teaming exercises, protecting off-limits systems from interference while giving your team clear guardrails.

This guide explains how AI red teaming templates work and why they improve testing outcomes. It also includes ten free and low-cost templates to help your team run more effective adversarial testing.

What Are AI Red Teaming Templates, and How Do They Work?

Security teams use ready-made AI red teaming templates to streamline their attack simulations. Instead of starting from scratch each time, your team uses predefined prompts, scenarios, and evaluation criteria aligned with red team operations phases to document how your model behaves under stress.

At a practical level, red teaming templates operationalize adversarial testing. Without templates, red teaming can become inconsistent and dependent on individual testers.

In some cases, testers can inadvertently interfere with other systems, which is why structure matters. Embracing templates makes red teaming an organized, repeatable process that improves consistency and makes it easier to mesure red teaming effectiveness across testing cycles.

10 Best AI Red Teaming Templates

Red teaming doesn’t need to happen from scratch. Try these templates to consistently document exploits, findings, and more as part of a structured AI vulnerability assessment process.

1. AI Red Teaming Implementation Checklist

PurpleSec’s free checklist establishes clear guardrails and scope for AI red teaming. From outlining your testing procedures to documenting success metrics, this checklist can guide red teams during every exercise.

Notable features:

- Available in Word and PDF format

- Includes OWASP Top 10 attack scenarios

- Addresses multiple testing scenarios, from jailbreaking to bias elicitation and prompt injections

2. CleverX AI Red Teaming Template

Plan and execute your next red teaming exercise with this free Notion template. It documents details about your current LLM model and includes fast, checklist-style sections to streamline goal-setting and scoping.

Notable features:

- Mobile-friendly template available in Notion

- Addresses roles and diversity considerations

- Includes attack vector plans for bias, hallucinations, context exploitation, and more

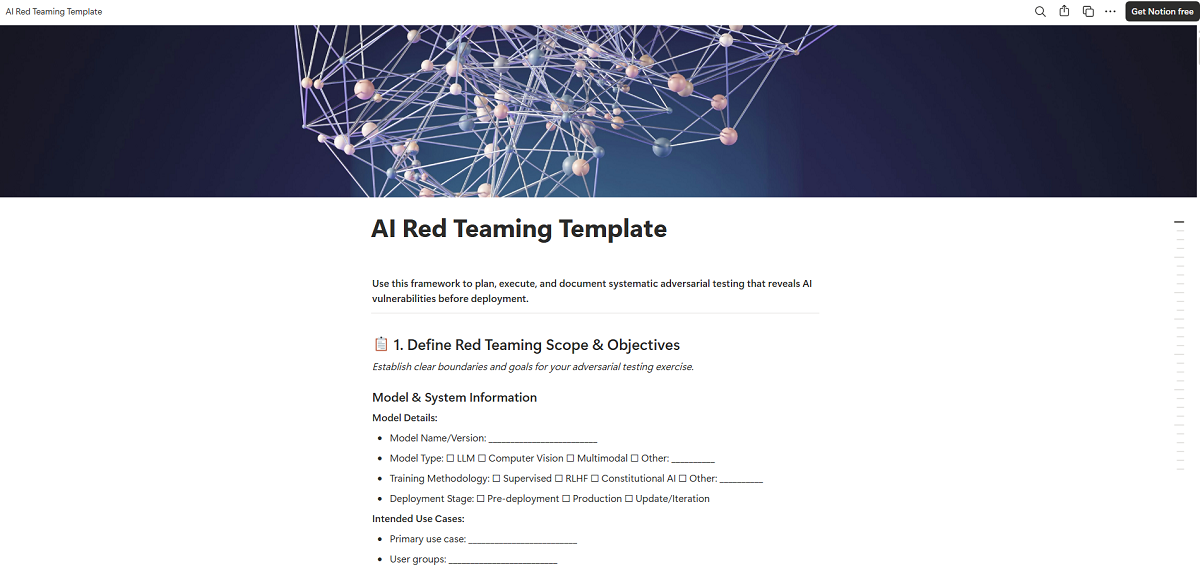

3. Rules of Engagement Template

Rules of Engagement (ROE) are a must-have for any AI red teaming exercise. This document outlines what the red team can and can’t do during an exercise and designates certain systems or infrastructure as off-limits.

Notable features:

- Provides sections for authorized targets and restrictions

- Includes customer-facing language for businesses that provide red teaming as a service

- Offers a detailed list of contacts for issue resolution

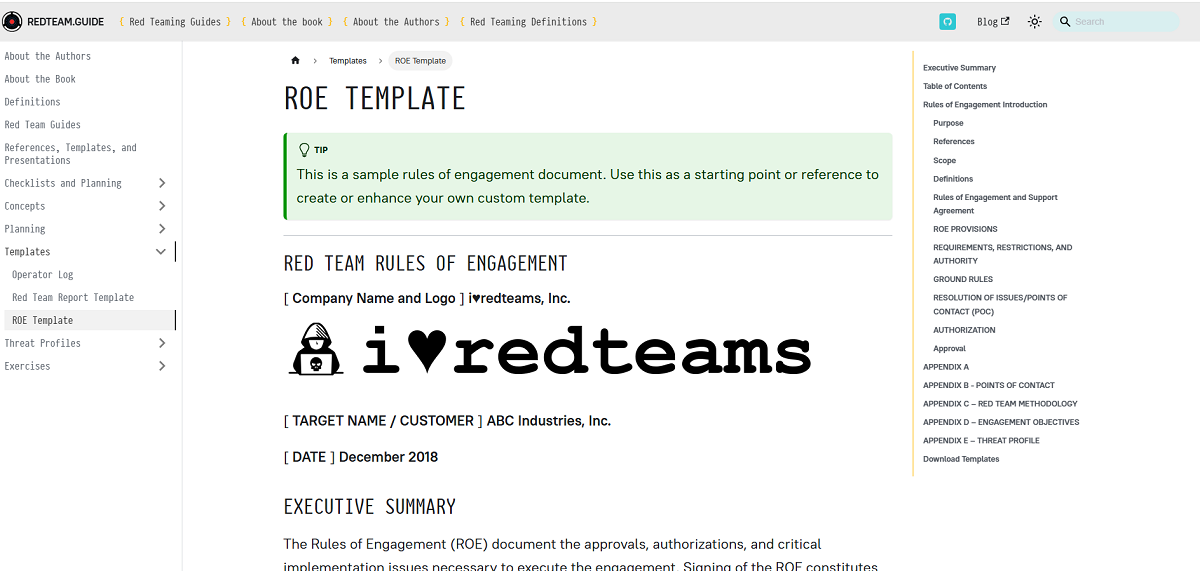

4. Red Teaming GitHub Repo Template

Need a more technical resource? The Azure OpenAI “aoai-redteam-copyright-template” repo includes a readymade evaluation flow for generative AI apps. The template covers prerequisites, metaprompt/system message template, prompt sets, and result-logging patterns.

Notable features:

- Designed specifically for copyright protection

- Requires Python 3.10 or later, Azure OpenAI, and Promptflow

- Includes a visual guide for implementation

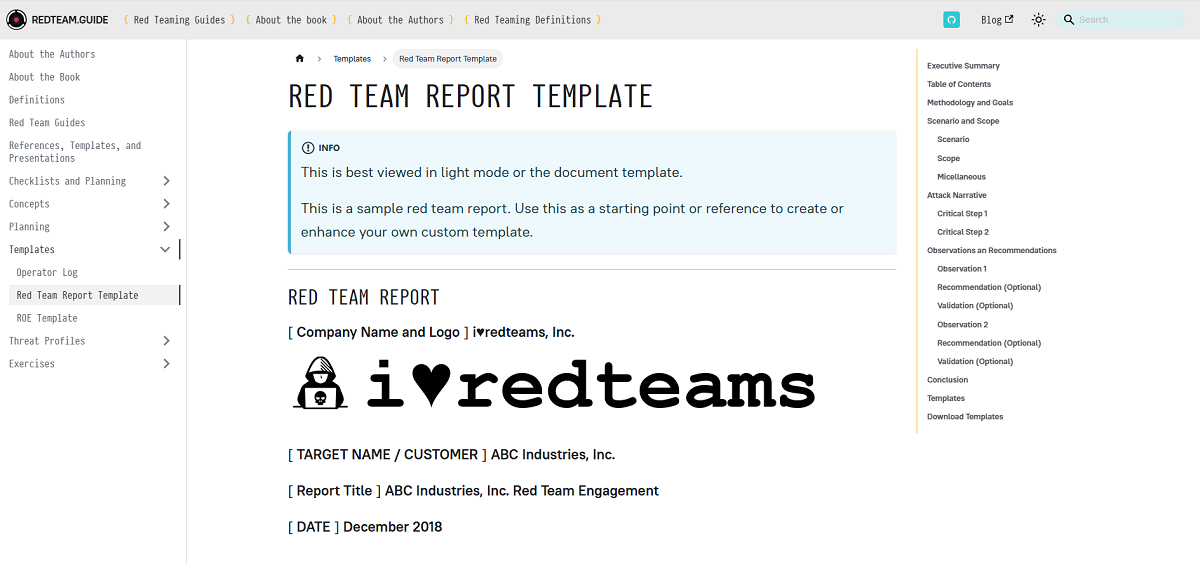

5. Red Teaming Report Template

This AI red teaming template is helpful for sharing the results of an exercise with multiple stakeholders. It’s simple to fill out and understand, making it an ideal starting point for reports tailored to non-technical teams.

Notable features:

- Makes goals and methods incredibly clear

- Includes placeholders for images and diagrams to improve understanding

- Focuses on observations, validations, and recommendations

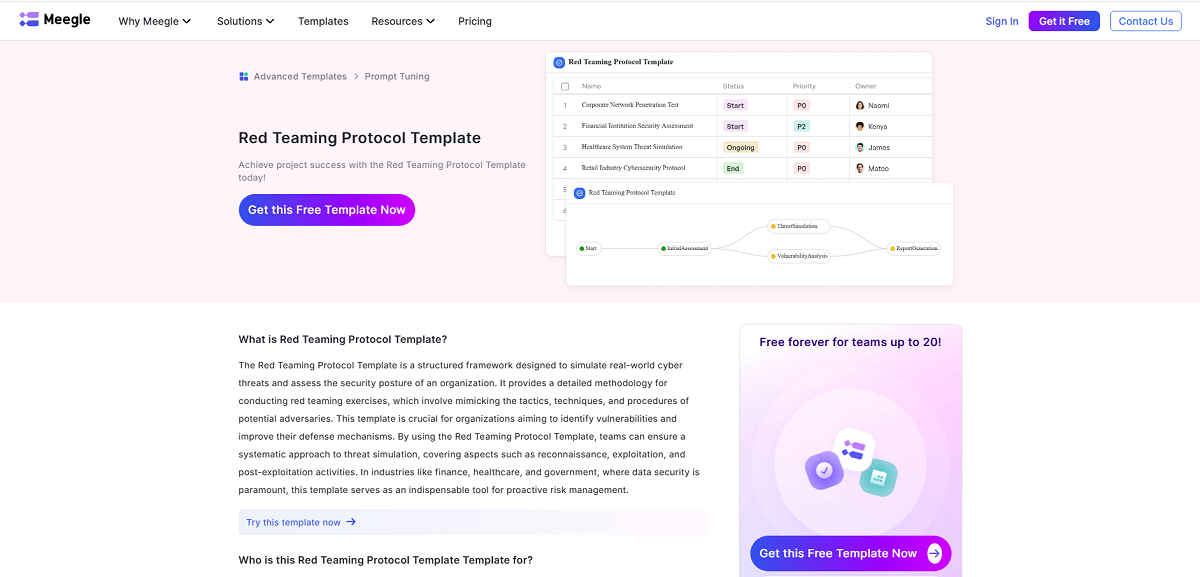

6. Red Teaming Protocol Template

Every red team exercise needs strong project management to stay on track. This free template from Meegle requires an account to download, but it can be a helpful tool for everything from logging methodology to assigning tasks to various red team members.

Notable features:

- Provides a readymade, visual project management tool

- Visualize protocols and assignees for each stage of red teaming

- Covers recon, exploitation, and post-exploit activities

7. Red Team Exercise PowerPoint Templates

Do you need to present your findings to a larger team? Copy these red team PowerPoint templates to ensure you cover all areas of the exercise—and save time on design.

Notable features:

- Simple, professional slides with thorough layouts

- Choose from several templates for ethical hacking, project status, pentesting, and more

- Includes professional visuals to improve understanding

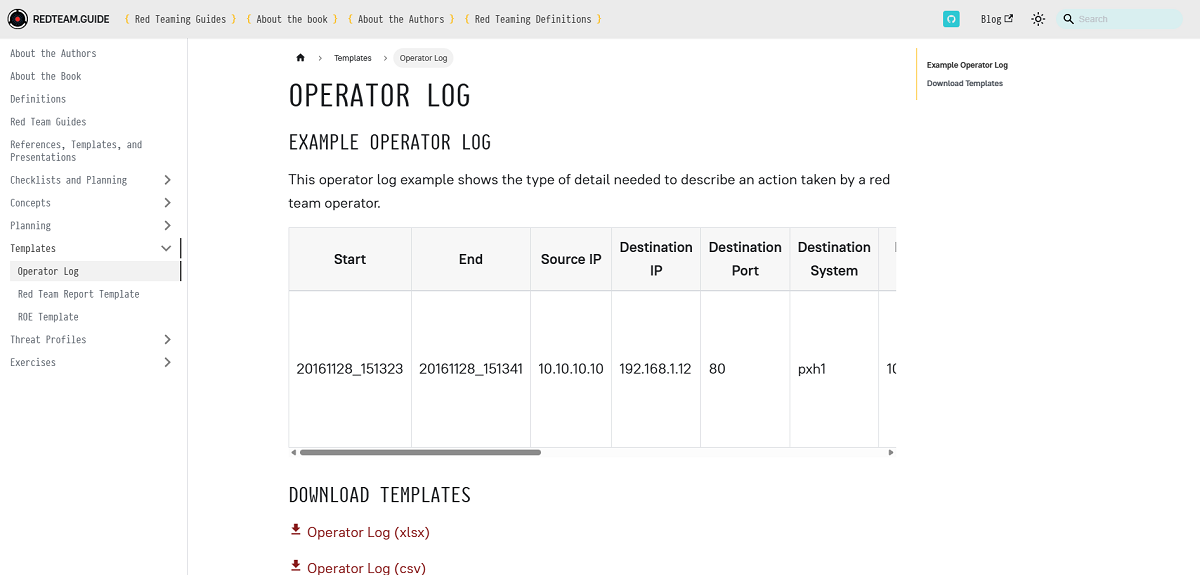

8. Operator Log Templates

AI red team members need to log their actions during an exercise. This free log template provides your team with a structured space to document start and end times, IPs, ports, systems, and more to simplify reporting.

Notable features:

- Downloadable as Excel or CSV

- Includes thorough columns for data collection

- Fully customizable to your team’s needs

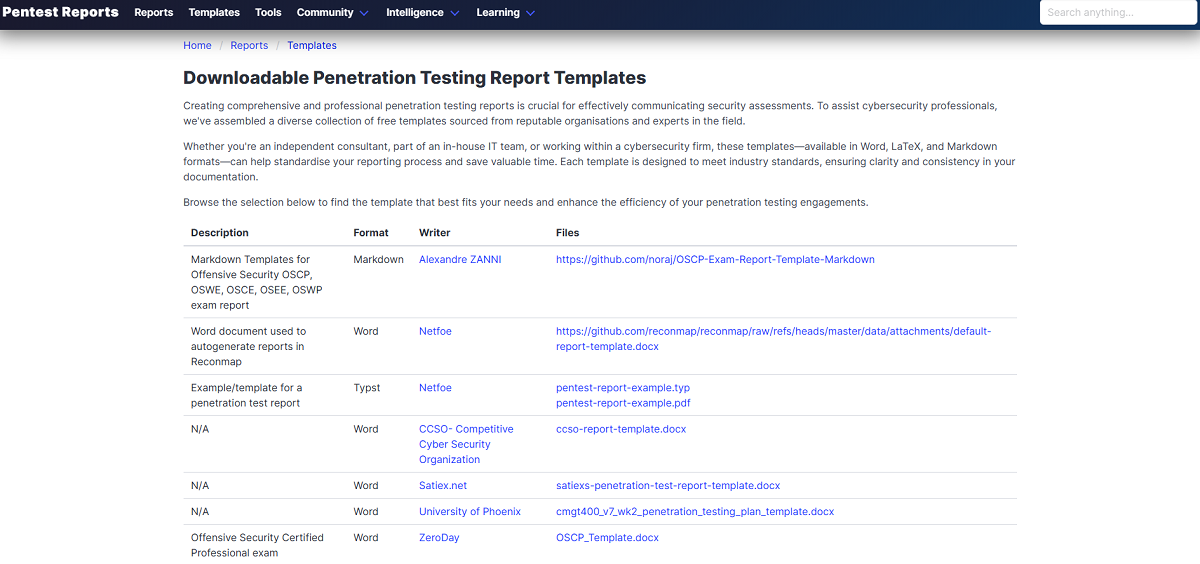

9. Penetration Testing Report Templates

Penetration testing and AI red teaming are different security testing approaches, but you can customize these pentesting templates to support red teaming exercises. This resource includes several GitHub repositories and report templates to cover every stage of adversarial testing.

Notable features:

- Includes GitHub resources, documents, and PDFs

- Offers pentesting reports and examples

- Tools for autogenerating reports with Reconmap

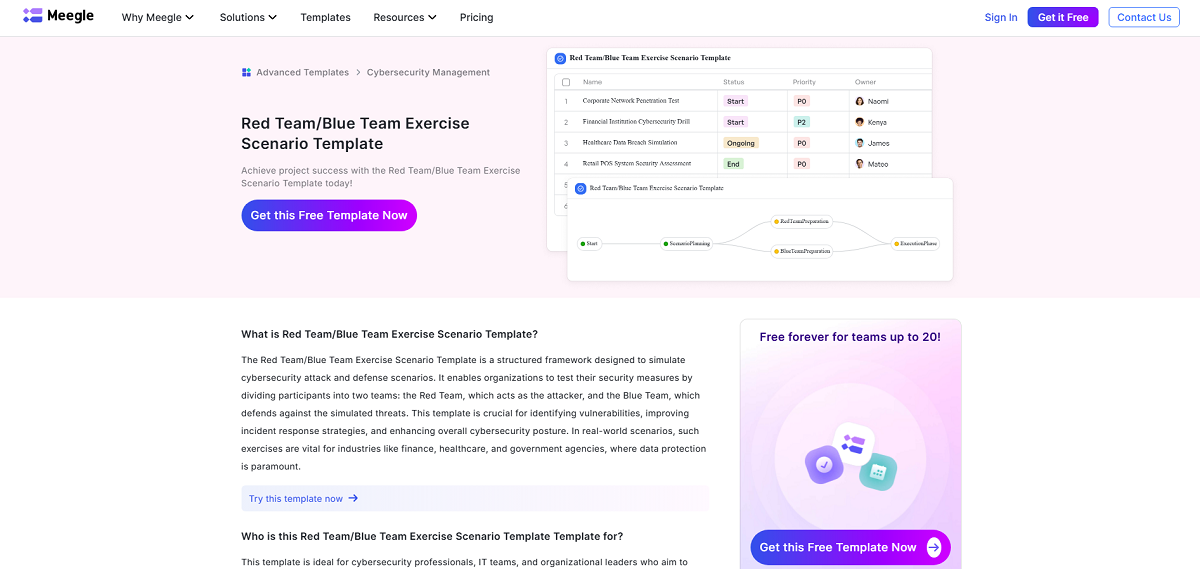

10. Red Team / Blue Team Exercise Scenario Template

Some organizations also have a blue team involved in testing, which helps defend the AI against attacks. It’s essential to align the red and blue teams during a test, and this free template does just that.

Notable features:

- Easily defines blue and red team members in a visual tool

- Drag-and-drop process planning for red and blue team exercises

- Manage schedules for both teams in a single view

Turning Red Teaming Templates Into Real Security Validation with Mindgard

AI red teaming templates give your team structure. They define the scope, document findings, and make testing repeatable. But templates alone can’t prove that your AI systems are safe.

Real attackers don’t follow templates. They exploit unexpected model behavior, hidden data exposure paths, and unsafe tool interactions.

Mindgard’s Offensive Security solution turns those templates into active security validation. Instead of relying on manual testing alone, Mindgard continuously tests your AI systems against real risks, such as prompt injection, sensitive information exposure, unsafe tool execution, and model manipulation. Teams see how their systems behave in real-world conditions, instead of how they should behave on paper.

Mindgard covers every stage of the AI lifecycle:

- Mindgard’s AI Security Risk Discovery & Assessment discovers where AI exists across your environment and identifies high-risk AI systems that require immediate testing, ensuring that your red teaming efforts focus on the areas that matter most.

- Mindgard’s Automated AI Red Teaming automates the adversarial testing process by continuously launching real-world attack simulations against your models. This enables your security team to identify vulnerabilities faster and know whether your controls are effective.

- Mindgard’s AI Artifact Scanning continuously tests models in live environments to identify vulnerabilities that only appear at run time. It detects prompt injection exposure, unsafe model behavior, and configuration risks as they occur. Security teams gain real-time visibility into active threats, enabling them to remediate weaknesses before attackers exploit them.

Together, these capabilities move red teaming beyond documentation. Templates provide the structure, but Mindgard gives security teams clear visibility into AI risks. Request a demo to discover how Mindgard can give your team confidence that your systems can withstand real-world adversarial pressure.

Frequently Asked Questions

Why should we use templates instead of designing red team tests from scratch?

Starting from scratch gives you the most control, but many businesses don’t have time to recreate tests or reports from scratch. Plus, it can lead to inconsistent coverage and undocumented gaps. Templates provide a structured foundation, enabling your team to focus on uncovering real vulnerabilities rather than reinventing the testing process each time.

Can AI red teaming templates be customized?

Absolutely, and you should customize them. Templates are just a structure to follow; they aren’t scripts. Security teams should adapt AI red teaming templates to align with their infrastructure and risk profile.

How often should AI red teaming templates be used?

Red teaming occurs on an ongoing basis, so you may need to use your templates weekly, if not daily. Templates make it easier to test during key moments such as pre-deployment reviews, major model updates, new feature releases, or policy changes.