Mindgard Recognized as UK's Most Innovative Cyber SME 2024 at Infosecurity Europe

Dr. Peter Garraghan

As AI gets infused in every software application that powers our personal and corporate lives, ensuring their safety and security is more crucial than ever. One effective way to achieve this is through AI red teaming - a process that simulates adversarial attacks on AI systems to identify potential vulnerabilities.

While security researchers and builders employ various red teaming techniques, the lack of standardised practices creates confusion and inconsistency. Different approaches are used to assess similar threat models, making it difficult to compare the relative safety of different AI systems objectively, and especially challenging to track these threats and create a remediation strategy.

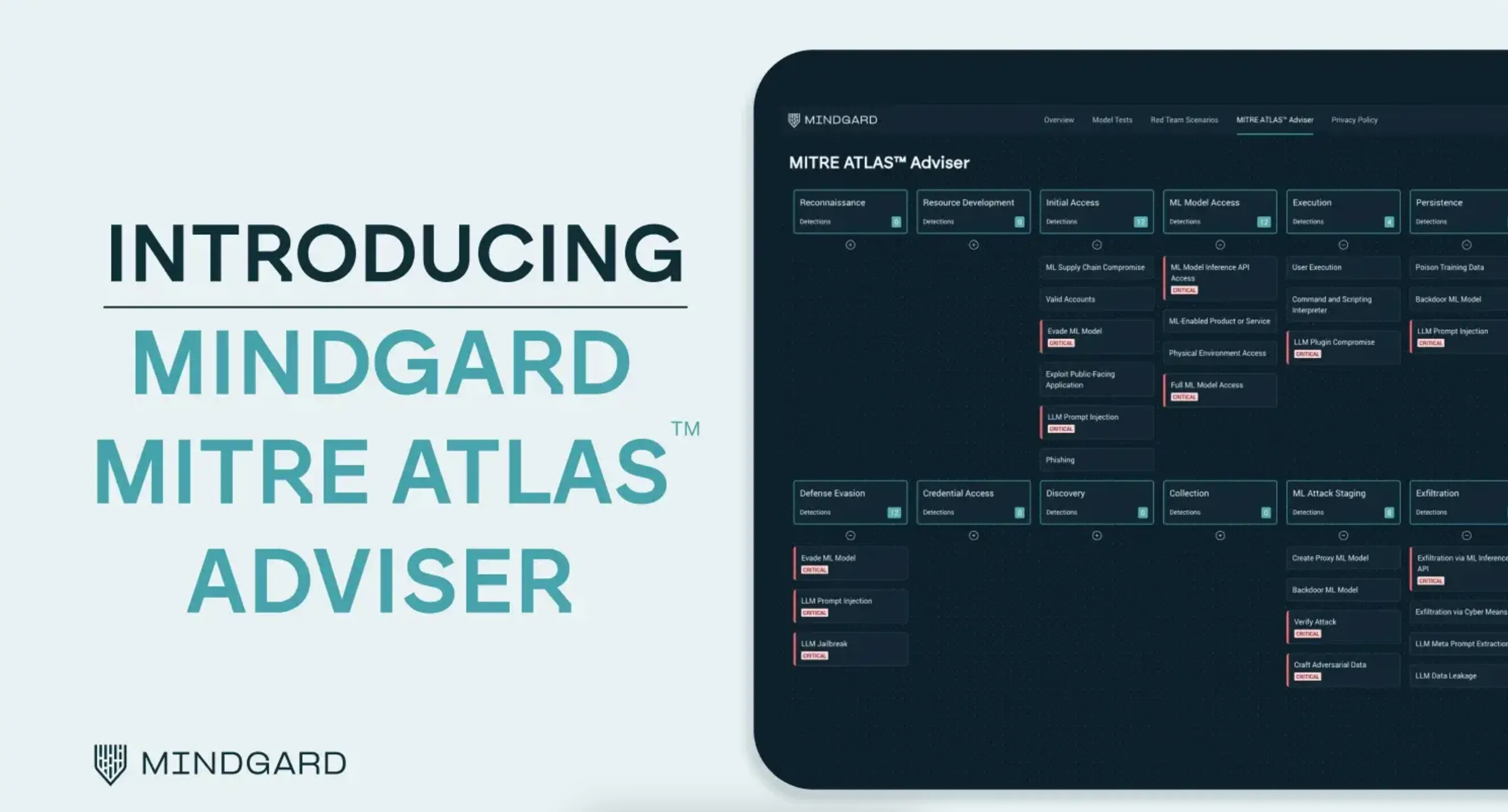

To overcome this challenge, we are thrilled to announce a new feature called MITRE ATLAS™ Adviser in Mindgard that helps standardise AI red teaming reporting. Our goal is to establish consistent practices for systematic red teaming, enabling enterprises to effectively manage today's risks and prepare for future threats as AI capabilities continue to evolve. Please find a sneak peek of the UI below:

We have aligned Mindgard’s attack library with the ATLAS™ framework to enable enterprise teams focused on AI safety and security, including Responsible AI, AI Red Team, Offensive Security, Penetration Testing, Security Architecture, and Security Engineering to achieve the following:

As of today, Mindgard can standardise reporting of adversary techniques across the 14 tactics targeting AI systems, as shown below:

MITRE is a not-for-profit organisation that maintains Adversarial Threat Landscape for Artificial-Intelligence Systems (ATLAS™), which is a curated knowledge base of adversary tactics and techniques against Al, observed with the help of Al red teams and security groups. Like the heavily adopted MITRE ATT&CK® framework, ATLAS™ focuses on enhancing the understanding of adversarial tactics but is specifically tuned to address vulnerabilities within AI systems. In the process, MITRE has compiled case studies of real-world exercises and incidents against AI to highlight the impact of this new risk we are facing.

To give you a sense of how extensive this body of work is, there are a total of 56 techniques covered by ATLAS™ framework across 14 tactics (with a new one called ML Model Access) including a few adopted from ATT&CK® framework to support the defence efforts of enterprise security teams. This covers the entire AI attack chain from Reconnaissance to Impact, and includes AI assets such as model, software, hardware, and data. At Mindgard, we are big fans of their work, so we decided to adopt ATLAS in our product – thank you MITRE for helping standardise AI security! You can see Mindgard’s MITRE ATLAS™ dashboard in action below:

At Mindgard, we are building an automated red teaming platform to empower enterprise security teams to continuously attest, assure, and secure AI systems. With Mindgard, you can red team popular models hosted by us, or test your custom model with the ability to run automated tests or specific attack scenarios on-demand, generate quantified risk reports, and gain insights into model risk landscapes. All you need is an inference endpoint!

Additionally, Mindgard provides remediation advice to help you plan and strengthen your AI defences. What's more, Mindgard enables multimodal AI red teaming, making it applicable to both traditional AI and Generative AI (GenAI) models. With one of the most advanced AI attack libraries including the original attacks developed by our stellar R&D team, we cover impacts on privacy, integrity, abuse, and availability of AI systems.

You can try our free demo now to explore various scenarios and run red teaming tests on AI models. Plus, integrate Mindgard with your MLOps pipeline to set pass/fail thresholds and manage risky AI models - ensuring the integrity of your AI-powered systems.

Through our intuitive interface, you can test your models against the following risks in AI systems tracked by OWASP Top 10 for LLMs:

1. Prompt Injection

2. Training Data Poisoning

3. Model Denial of Service

4. Sensitive Information Disclosure

5. Excessive Agency

6. Overreliance

7. Model Theft

By adopting standardized practices for AI red teaming, we can collectively improve the safety and security of AI systems. Stay ahead of the curve with Mindgard’s MITRE ATLAS Adviser – book a demo here to learn more about how Mindgard enables your enterprise to attest, assure, and secure your AI!