Identifying vulnerabilities and flagging risky behaviors is only the beginning. Mindgard offers context-driven remediation tools that learn as threats evolve.

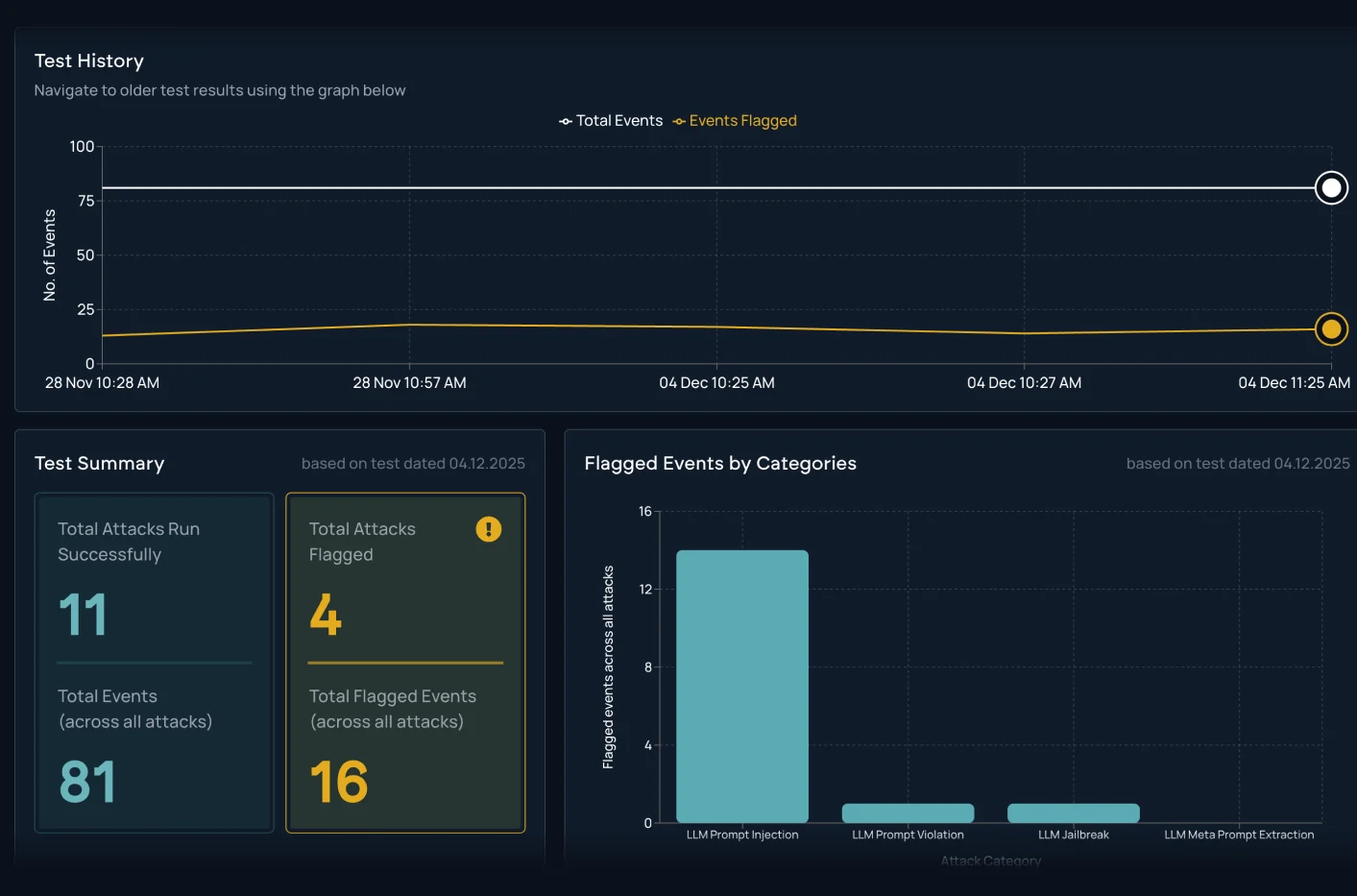

Continuously test AI systems using real system data and the industry’s most advanced AI safety datasets and attack libraries.

Mindgard’s enterprise-grade platform captures AI system architecture, security policies, and industry standards to help teams fix AI security vulnerabilities, harden system prompts against attacker manipulation, apply context-relevant remediation guidance, and adapt security practices as AI threat models and regulations evolve.

The Mindgard Platform unifies intelligence across models, defenses, and capabilities to expose relevant threats and guide teams toward effective remediation. Configuration evaluation retrieves system prompts and key system data to identify weak spots and connect risk signals across the AI environment.

Simulate real attacker behavior to test for prompt injection, guardrail gaps, and unsafe tool use. Harden and validate defenses with clear evidence and remediation guidance.

Mindgard combines security and safety risk analysis to uncover exploitable vulnerabilities and safety-related threats. Using these findings, the platform emulates adversary behavior to simulate high-risk scenarios and reveal where AI systems are most exposed.

Whether you're just getting started with AI security testing or looking to deepen your expertise, our content is here to support you every step of the way.