What is AI Data Security? How AI Detects Threats & Safeguards Sensitive Data

In This Article

AI data security is the practice of safeguarding both the data that powers AI and machine learning systems and the AI models themselves. It applies across the entire AI system lifecycle.

That AI system lifecycle covers collection, training, fine-tuning, inference and deployment. It defends against tampering, theft, leakage and adversarial manipulation. The AI system lifecycle also combines traditional data-protection methods such as encryption, role-based access, data classification, masking and DLP with AI-specific controls such as data provenance, prompt filtering, output sanitization, model behavior monitoring and continuous AI red teaming.

These approaches are set out in the NIST AI Risk Management Framework, the OWASP Top 10 for LLM Applications (2025) and the CISA / NSA Joint Cybersecurity Information on AI Data Security (May 22, 2025). The threats AI data security defends against include data poisoning, prompt injection, model inversion, training-data leakage, model theft and shadow AI exposure. None of these threats respond to legacy security tooling on its own.

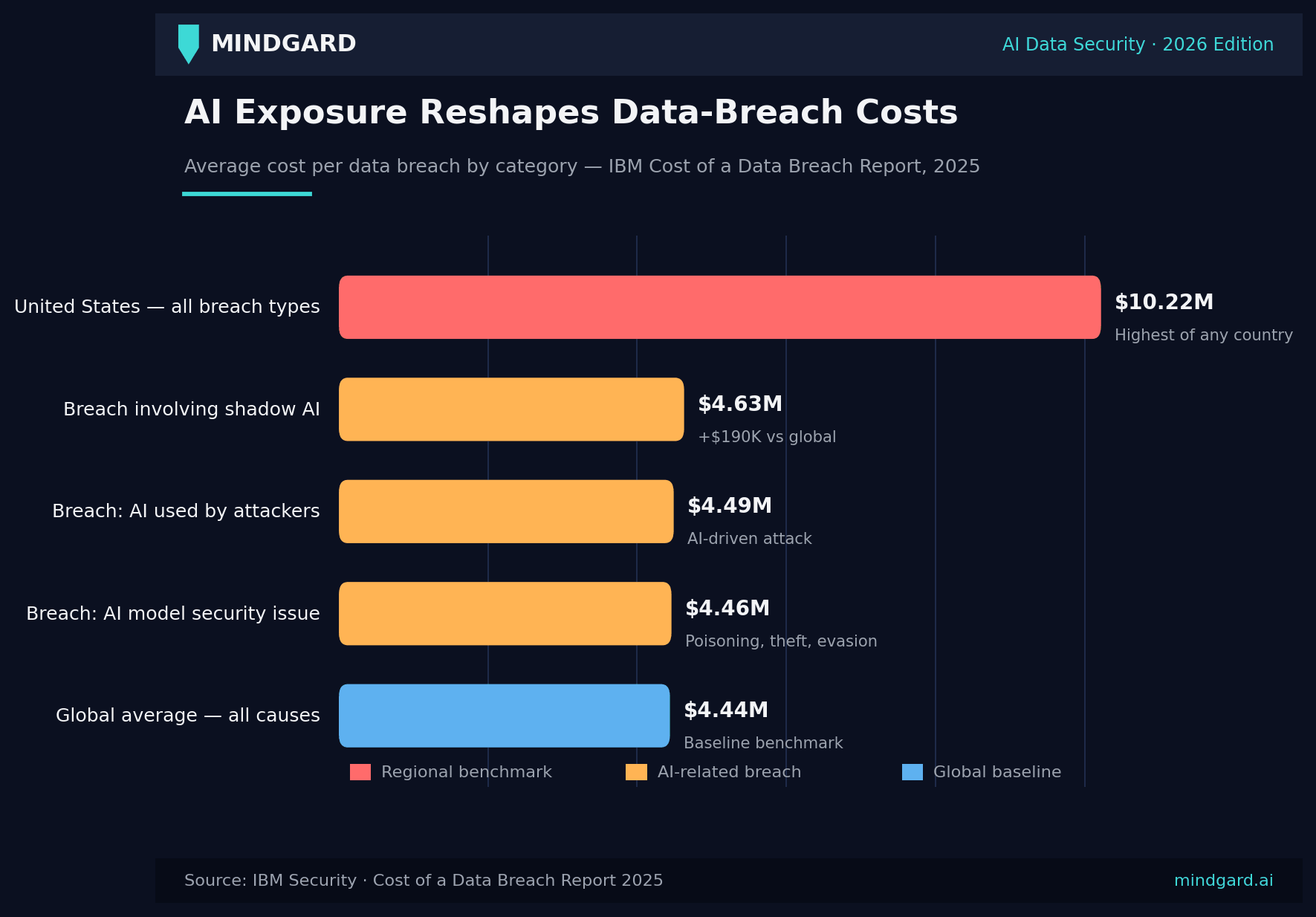

The stakes are now measurable. IBM's 2025 Cost of a Data Breach Report found that 13 percent of organizations were breached through their AI models or applications in 2025. 97 percent of those breached organizations had no AI access controls in place.

Breaches involving shadow AI cost $4.63 million on average. That figure sits $190,000 above the $4.44 million global baseline.

Key Takeaways

- Definition. AI data security is the practice of protecting both the data inside AI systems and the AI models themselves across collection, training, inference and deployment. It combines traditional data-protection controls with AI-specific controls such as continuous AI red teaming.

- Threats. The six AI-specific threats every program must cover are data poisoning, prompt injection, model inversion, training-data leakage, model theft and shadow AI exposure. None of these threats are stopped by legacy security tooling on its own.

- Frameworks. Three authoritative frameworks anchor AI data security in 2026. They are the NIST AI Risk Management Framework, the OWASP Top 10 for LLM Applications (2025) and the CISA / NSA Joint Cybersecurity Information on AI Data Security (May 2025).

- Cost. Breaches involving shadow AI cost $4.63 million on average. That figure sits $190,000 above the $4.44 million global baseline. 97 percent of organizations breached through their AI models or applications in 2025 had no AI access controls in place, per IBM, 2025.

- Action. Continuous AI red teaming is the single control that surfaces these gaps before attackers do. It is recommended in both the NIST AI RMF and Article 15 of the EU AI Act for high-risk AI systems. It is the core of the Mindgard platform.

AI Data Security Threats: The 12 You Must Cover

The table above lists every AI-specific threat enterprises face in 2026, mapped to the AI surface it attacks, severity and primary defensive control. Three threats deserve closer attention because they are the most cited and the most damaging.

Data poisoning

In data poisoning attacks, the adversary inserts crafted examples into training data, fine-tuning data or retrieval corpora used by RAG models. Data poisoning aims to affect what the model has learned.

Recent research conducted in 2024 and 2025 demonstrated that only 0.1% poisoning of training data could change the model's behavior significantly. In addition, once compromised, a model continues functioning normally but producing biased or manipulated results. Prevention measures against data poisoning include data provenance, dataset signing and version control.

Prompt injection

Prompt injection happens when user input or content extracted from another source contains instructions for the model that bypass the limitations set in its system prompt. As a result, the model ignores its guardrails, leaks data or performs unauthorized actions. Prompt injection can be direct or indirect.

For indirect prompt injection, an adversary embeds instructions into a document, email, webpage or a database entry. As the model trusts information provided by its own pipeline, it follows the instructions. Mitigation strategies include system prompt isolation and sanitization of content from untrusted sources, structured input/output format and gated tool calls.

Model inversion

Model inversion is a privacy threat in which an adversary uses specially selected queries to deduce some parts of the model's training data. Model inversion exploits the fact that models carry signatures of their training datasets. PII, medical or proprietary data pose the most threat to the model due to being especially sensitive.

What Is AI Data Security?

AI data security is the practice of protecting both the data that fuels AI systems and the AI systems themselves across the machine-learning lifecycle. That lifecycle covers collection, preprocessing, training, fine-tuning, inference and decommissioning. The definition aligns with the CISA / NSA Joint Cybersecurity Information on AI Data Security published May 22, 2025.

"Data security is a critical enabler that spans all phases of the AI system lifecycle. Successful data management strategies must ensure that the data has not been tampered with at any point throughout the entire AI system lifecycle. The data must be free from malicious, unwanted and unauthorized content. It must not have unintentional duplicative or anomalous information."

Source: CISA, NSA, FBI, Australian Signals Directorate, UK NCSC and New Zealand GCSB. Joint Cybersecurity Information: AI Data Security, May 22, 2025.

AI Data Security vs. AI Security vs. Data Security

These three terms get used interchangeably. They are not the same.

AI data security sits at the intersection. It treats training data, prompts, embeddings, model weights and outputs as security-sensitive assets in their own right.

Why Traditional Security Methods Fall Short

Traditional approaches to data security depended on manual effort and predefined rules. Firewalls, signature-based detection and static access permissions cannot keep up with zero-day exploits, insider threats or the new class of attacks targeting AI systems.

AI data security extends traditional cybersecurity with machine learning, real-time anomaly detection and continuous automated red teaming. That last control is specifically recommended by the NIST AI Risk Management Framework's MEASURE function. It is also required for high-risk AI systems under Article 15 of the EU AI Act. The result is a control set that adapts as fast as the attack surface.

Frameworks That Govern AI Data Security

Four authoritative frameworks define what an AI data security program should look like in 2026.

NIST AI Risk Management Framework

The NIST AI Risk Management Framework is the U.S. National Institute of Standards and Technology's reference for managing AI risk. It is organized around four functions.

- GOVERN. Establish policies, accountability and an AI governance program.

- MAP. Identify context, intended use and risk exposure.

- MEASURE. Test, monitor and red-team the system.

- MANAGE. Respond to incidents, retire models and continuously improve.

IBM's 2025 study found that 63 percent of breached organizations either had no AI governance policy in place or were still drafting one. The GOVERN function exists to close that gap.

OWASP Top 10 for LLM Applications (2025)

The OWASP Top 10 for LLM Applications (2025) is the de facto industry list of the most critical risks in large language model deployments. The 2025 edition includes prompt injection (LLM01), insecure output handling (LLM05), training data leakage (LLM06), excessive agency (LLM08) and model theft (LLM10).

CISA / NSA Joint Cybersecurity Information on AI Data Security

The CISA / NSA paper published May 22, 2025 was the first multi-agency multi-country guidance focused specifically on data security in AI systems. It is co-signed by U.S. CISA and NSA, the FBI, the Australian Signals Directorate, the UK NCSC and New Zealand's GCSB. The paper calls for cryptographic protection of training datasets and model weights at rest and in transit. It also calls for digital signatures on training data so any tampering becomes detectable.

EU AI Act Article 15

Article 15 of the EU AI Act requires that high-risk AI systems be designed and developed to be resilient against attempts to alter their use, behavior or performance by exploiting their vulnerabilities. The Article specifically calls out data poisoning and adversarial examples as attacks that high-risk systems must resist. Continuous red teaming is the established way to demonstrate that resilience.

Real-World Breach Costs and the Business Case

IBM's 2025 Cost of a Data Breach Report makes the financial case for AI data security in four numbers.

- The global average cost of a data breach is $4.44 million.

- The U.S. average is $10.22 million, the highest of any country.

- The average for breaches involving shadow AI is $4.63 million. That figure sits $190,000 above the global baseline.

- The average for breaches tied to AI model security issues such as poisoning or theft is $4.46 million.

A second set of numbers explains the gap. 13 percent of organizations reported a breach involving their AI models or applications in 2025. An additional 8 percent admitted they did not know whether they had been breached through AI. Of the confirmed AI breaches, 97 percent of organizations had no AI access controls in place. 60 percent of AI-related security incidents led to compromised data. 31 percent led to operational disruption.

Organizations that used AI-powered defenses extensively shortened their breach lifecycle by an average of 80 days and saved $1.9 million versus those with no AI defense. That was the single largest cost-reducing factor in the study.

Shadow AI: Why Ungoverned Adoption Costs $670K More Per Breach

Shadow AI refers to the use of AI tools and services without the knowledge or approval of the organization's security and governance teams. Common examples include employees pasting customer data into a consumer chatbot, developers integrating an undocumented LLM API into a production service and product teams shipping AI features without a model security review.

IBM's 2025 study found that organizations with high levels of shadow AI saw $670,000 in additional breach costs compared with organizations that had low or no shadow AI. The cause is straightforward. Shadow AI tools sit outside DLP, IAM, logging and incident-response coverage. When something goes wrong the security team often does not know the tool exists.

Mitigation involves three controls working together.

- A live inventory of every AI system, model, agent and integration in use across the organization.

- A sanctioned AI gateway that routes employee AI usage through approved tools with full logging and DLP coverage.

- An employee policy that defines what data may be shared with AI tools and what may not.

Core Components of AI Data Security

AI data security involves:

- Threat detection: Machine learning detection models flag the unusual login times, lateral movement and bulk data exfiltration that traditional rule-based SIEMs miss. The same models can flag AI-specific signals such as anomalous embedding lookups against vector databases, unusual tool-call sequences from agents and prompts that score high for jailbreak intent.

- Access control: AI systems classify sensitive data and enforce role-based access control, multi-factor authentication and context-aware policies. This control matters more than any other for AI systems specifically. 97 percent of organizations that suffered an AI model or application breach in 2025 had no AI access controls in place.

- Encryption and data protection: AI classifies sensitive data and applies field-level encryption, tokenization or masking based on sensitivity score. The CISA / NSA paper specifically calls for cryptographic protection of training datasets and model weights at rest and in transit. It also calls for digital signatures on training data so any tampering becomes detectable.

It’s important to note that AI model security can also defend large language models (LLMs) against adversarial attacks such as model inversion attacks, data poisoning, and bias. Addressing these risks is essential for effective AI data security and for building safe, compliant AI systems.

How Is AI Used in Data Security?

Most enterprise AI data security programs use AI for four operational tasks.

- Real-time monitoring. AI continuously analyzes logs, endpoints, network traffic and increasingly model behavior in production. Runtime AI security tools detect drift, prompt-injection attempts, jailbreaks and data-exfiltration patterns from agent tool calls. They then trigger SIEM rules or block the action. Mindgard's AI Runtime Protection is one example of this class.

- Anomaly detection. AI baselines normal behavior across users, hosts and AI agents. It flags outliers in real time. The most valuable signals for AI data security specifically include unusual embedding lookups against vector databases, anomalous tool-call sequences from agents and prompts that score high for jailbreak intent.

- Data loss prevention. AI scans and classifies sensitive data at rest and in motion. It applies encryption or access restrictions automatically when sensitive content is detected leaving its expected boundary.

- Insider-threat detection. AI profiles user behavior over time. It can spot subtle indicators of compromised credentials or malicious insiders even when the activity mimics normal usage patterns.

How to Implement AI Data Security: An Enterprise Checklist

Use this ten-step checklist to operationalize a program. The steps are grouped by NIST AI RMF function so the list doubles as a maturity model.

1. GOVERN

- Assign an executive owner for AI security. Document accountability for AI model approval and incident response.

- Build a live inventory of every AI system in use across the organization. Cover internal tools, vendor products, shadow usage, pilots and embedded features inside existing platforms.

2. MAP

- Classify each AI system by use case and risk. Map data flows in and out of each system.

- Conduct an AI security assessment of every high-risk system. Mindgard's AI Assessment is one option.

3. MEASURE

- Stand up continuous AI red teaming against production systems. Test for the OWASP LLM Top 10 risks at a minimum.

- Add runtime monitoring for prompt injection, jailbreak attempts and abnormal tool-call patterns.

4. MANAGE

- Wire AI findings into existing SIEM and ticketing workflows so they appear alongside traditional security alerts.

- Build incident-response playbooks specific to AI scenarios such as a poisoned model, a leaked system prompt or a compromised agent.

- Apply DLP, RBAC and MFA to every AI access point. That includes model APIs, fine-tuning jobs and vector database queries.

- Review and update the program every quarter as new threats emerge and as the organization deploys new AI capabilities.

Securing RAG and Agentic AI Pipelines

Retrieval-augmented generation and agentic AI extend the attack surface beyond the model itself. Every document the agent retrieves is a potential indirect prompt-injection vector. Every tool the agent can call is a potential excessive-agency risk. Every embedding in the vector database is a potential model-inversion target.

Securing these pipelines requires four controls.

- Document provenance. Track where every document in the retrieval corpus came from. Sign sources so tampering becomes detectable.

- Tool-call gating. Require human approval for any tool call that has a real-world side effect such as sending email, executing code or moving money.

- Agent red teaming. Run continuous adversarial tests against the agent's full pipeline, not just the underlying model.

- Observability. Log every retrieval, every tool call and every output. Send the logs to a SIEM with rules tuned for AI-specific anomalies.

"Traditional penetration testing assumes a fixed attack surface. AI systems do not have one. Every new prompt, every new tool the agent is given and every new document in the RAG index expands the surface. The only way to keep up is continuous automated red teaming that runs against the production system, not a one-off audit." - Dr. Peter Garraghan, CEO and CTO, Mindgard.

Regulatory and Compliance Landscape

Four regulations now shape AI data security obligations.

- EU AI Act. Article 15 requires resilience against data poisoning and adversarial attacks for high-risk systems. Penalties can reach 7 percent of global annual turnover.

- GDPR. Applies whenever an AI system processes personal data. Data minimization, lawful basis and the right to object to automated decisions all extend to AI.

- HIPAA. Healthcare organizations using AI to process protected health information must apply the same safeguards required for any other ePHI system. Vendor risk management of AI providers is now table stakes.

SOC 2. AI-specific controls are increasingly required under SOC 2 Type II audits, particularly under the Common Criteria sections on access control and risk management.

Keeping Data Safe in an AI-Driven World

AI is transforming data security from a reactive, manual effort into a proactive, intelligent system capable of evolving alongside threats. By automating threat detection, streamlining responses, and enhancing visibility across complex environments, AI empowers organizations to stay ahead of attackers.

Organizations that embrace AI-powered security gain stronger protection and the agility to respond to whatever comes next.

Mindgard’s advanced Offensive Security solution enables organizations to create and run secure AI platforms. Discover how Mindgard can help you stay ahead of evolving risks: Book a demo today.

Frequently Asked Questions

Can AI data security tools integrate with existing cybersecurity systems?

Yes. Modern AI data security tools integrate with SIEM platforms such as Splunk, Sentinel and Chronicle. They also integrate with SOAR, EDR/XDR, IAM, CSPM and DLP tools via OpenAPI, webhook or native connectors. Findings from AI red teaming and runtime protection appear alongside traditional findings in the same workflow.

How does AI handle encrypted data in threat detection?

AI cannot directly analyze encrypted data content. It can detect suspicious patterns in metadata, access logs and user behavior associated with encrypted files. Examples include unusual download patterns or access from unknown devices.

Are AI security systems vulnerable to attacks themselves?

Yes. AI systems face threats traditional software does not. The OWASP Top 10 for LLM Applications (2025) enumerates ten of them, including prompt injection, training data leakage and excessive agency. Defense requires data provenance, model integrity verification, query rate limits, output validation and regular red-team testing.

What is data poisoning and how does it affect AI?

Data poisoning is an attack where an adversary inserts crafted examples into training, fine-tuning or RAG data to change a model's behavior. Research shows that replacing as little as 0.1 percent of training data with carefully crafted misinformation can significantly increase a model's rate of harmful or incorrect outputs.

How does AI data security differ from traditional cybersecurity?

AI data security extends traditional cybersecurity with machine-learning anomaly detection, automated response and continuous red teaming of the model and pipeline. It also protects AI-specific assets such as training data, model weights, embeddings and agent tools that traditional cybersecurity does not address.

How much does an AI-related data breach cost?

IBM's 2025 Cost of a Data Breach Report puts the global average at $4.44 million. The United States average is $10.22 million. The average for breaches involving shadow AI is $4.63 million, which is $670,000 higher than for organizations with little or no shadow AI exposure.

What frameworks should an AI data security program follow?

The four most commonly used are the NIST AI Risk Management Framework, the OWASP Top 10 for LLM Applications (2025), the CISA / NSA Joint Cybersecurity Information on AI Data Security (May 22, 2025) and Article 15 of the EU AI Act for high-risk AI systems operating in or selling into the EU.