31 Best AI Red Teaming Tools: Compare Enterprise, Open-Source & Research Tools

In This Article

Companies scrambled to secure their rapidly expanding and evolving AI deployments, pushing worldwide AI security spending to $25.53 billion in 2026, per MarketsandMarkets. With an expected compound annual growth rate (CAGR) of 14.8%, the AI security market is expected to reach $50.83 billion by 2031. This growth indicates that organizations are shifting away from applying security controls after-the-fact to integrating proactive security testing practices such as AI red teaming.

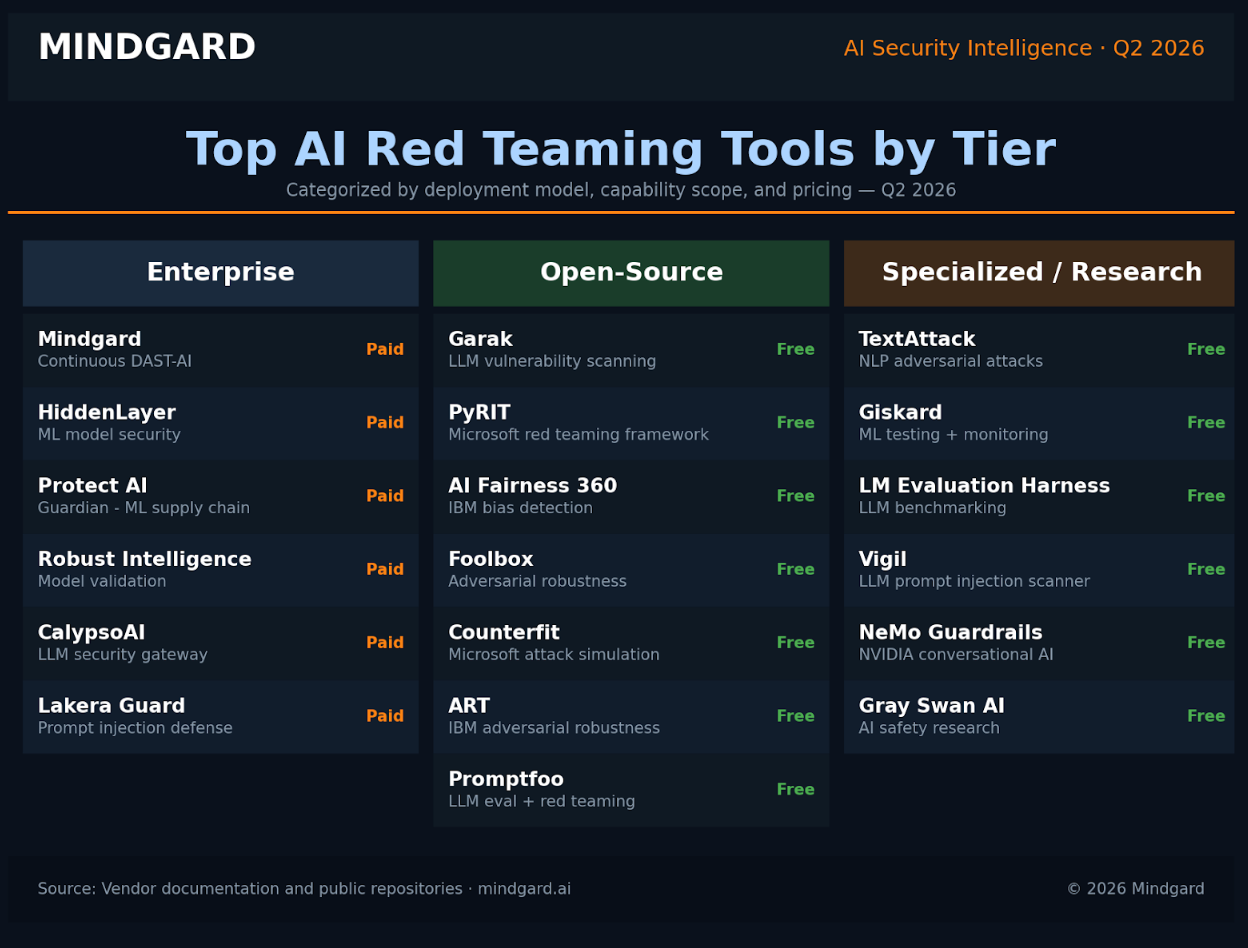

In this guide, we provide an overview of 31 different AI red teaming tools organized into enterprise, open-source, and research tiers. For each tool, we outline pricing, key capabilities, and the scenarios each tool is best suited for. Use the interactive table below to filter by tier, search by name, or sort by category.

What Are AI Red Teaming Tools?

AI red teaming tools allow security teams and researchers to stress-test AI systems by simulating the attacks and misuse that real-world adversaries would carry out.

In other words, rather than waiting for bad behavior to occur with an AI system in production, you can use AI red teaming tools to proactively discover the failure modes, vulnerabilities and unsafe behaviors that are most likely to be exploited by adversaries. Organizations use the findings from these red teaming tools to improve their overall security posture, before those issues become a real problem for AI systems.

It's easy to confuse AI red teaming with other forms of model evaluation or standard software penetration testing, but the difference is that AI red teaming tools are built to mimic the sophisticated, creative ways adversaries actually try to subvert AI systems. Most tools in this space share a few common capabilities:

- Adversarial prompt generation: The process of automatically generating prompts designed to elicit harmful, biased, or unintended responses from AI systems. This is the most foundational capability in the AI red teaming space.

- Jailbreak and safety bypass testing: Testing whether an AI system's safety guardrails can be bypassed using multi-turn manipulation attempts or indirect prompt injection.

- Data extraction and privacy testing: Prompt injections intended to get the AI to reveal information from its training data or other information that it shouldn’t have.

- Agentic attack simulation: AI agents will become more widespread, so red teaming tools are beginning to evaluate how an agent behaves if fed malicious inputs or placed in an adversarial environment.

- Bias and toxicity probing: AI red teaming tools can also be used outside of security contexts to determine whether a model generates biased, toxic, or otherwise unwanted outputs across diverse inputs.

There are nuances to each AI red teaming tool, but they all aim to do the same thing: allow your team to see how your AI actually behaves when put under pressure by someone trying to break it.

Already have safety mechanisms built into your models and applications? An AI red teaming tool can help you determine if those safety features actually work, or if there’s a way for a motivated attacker to bypass them.

AI Red Teaming vs. AI Penetration Testing vs. AI Security Scanning

These terms are often used interchangeably. They mean different things, attack different layers, and help solve different problems. However, many folks confuse them, and that’s where your security program begins to fall short.

- AI red teaming attempts to think and act like an adversary. We try to model how an attacker in the real world would attack your system. Instead of penetrating a single layer (model, prompt, etc.) we test how the system performs when under attack. We test models + prompts, downstream integrations, user input sanitization, etc. By combining these elements, we’re trying to find unknown risks, potential misuse cases, and system failures that arise when everything is functioning exactly how it does in production.

- AI penetration testing is scoped to identify and validate vulnerabilities. A red team will try to break anything they can, but pen testers prove vulnerabilities within a scoped endpoint (model endpoint, API, feature, etc.). Through technical review and standardized attack test cases, we take known exploitation techniques and prove vulnerabilities exist by providing actionable evidence (proof of exploit). Vulnerabilities are typically triaged based on these results.

- AI security scanning looks for known vulnerabilities. AI security scanners analyze endpoints, heuristics, and signatures to detect risk. Scanners tend to have broader coverage due to their limited scope. This makes them well suited to be run regularly to catch issues as soon as possible (i.e., within your CI/CD pipeline). They aren’t designed to develop new attacks or identify failure cases that could occur naturally.

AI security scanners have broad reach, while AI pentesting can tell you where you're vulnerable and may need to shore up your security. AI red teaming can demonstrate how an attacker could leverage vulnerabilities for maximum impact.

How to Choose an AI Red Teaming Tool

AI red teaming tools are excellent for adding rigor and repeatability to a process that can otherwise be fairly ad hoc. However, like any area of software security, there are a lot of tools to choose from, and not all solutions will work for your use cases. Here are some things you should think about before purchasing an AI red teaming platform.

- Consider your threat model first: What are you securing? A customer-facing chatbot? An internal code assistant? A fully deployed autonomous agent? The answers to these questions will inform which tool is best for you.

- Look for how attacks are created: Adversarial attack generation shouldn’t be limited to a curated list of past jailbreak prompts. Seek out a platform that offers model-assisted attack generation so that your security testing can keep up with advances in adversarial tactics.

- Think about compliance and documentation: How will your red team tool handle sensitive information about your model, training process, or underlying system prompts? Documentation and logging are critical if you’re operating in a regulated industry.

- Look at model and framework compatibility: How easily can your system be integrated with the models and LLM frameworks you use? Integration capabilities can vary widely between teams using proprietary models versus open source models or different deployment frameworks like LangChain or Hugging Face.

- Examine reporting options: Once you’ve discovered a vulnerability, who needs to know about it and how will you tell them? The best AI red teaming tools make it easy to document findings and route them to appropriate internal teams.

- Know your price point: AI red teaming tools range from open source and free to $10k-$100k+ annual contracts for enterprise solutions. Know what you’re getting for your money.

AI Red Teaming Tool Selection Matrix

Run through this AI red teaming tool evaluation grid as a framework for comparing AI red teaming tools based on maturity and fit.

AI red teaming tools are quickly becoming a standard part of responsible AI development. There are numerous tools to choose from so evaluate a few before deciding. Here are some of the best AI red teaming tools to help you get started.

We’ve identified examples of the best tools to red team your AI systems for various use cases, including:

- Mindgard: Great for Hands-on Expertise

- Garak: Great for AI Vulnerability Testing

- PyRIT: Great for Red Teaming AI Supply Chains

- AI Fairness 360: Great for Mitigating Bias

- Foolbox: Great for Neural Networks

- Meerkat: Great for Unstructured Data

- Granica: Great for Safeguarding LLM Data

Methodology: How We Selected These Tools

Before we get into the details of each platform, a few notes on our methodology here. While there are lots of useful prompt testing tools out there (and we’ll likely see more of those as time goes on), this list is biased towards tools that help with real-world AI red teaming exercises.

The platforms below should help teams test realistic attack scenarios like prompt injection, data leak retrieval, logical failure cases, jailbreak tests, etc.

With that said, we first compiled a list of tools that fulfilled as many of our criteria as possible. We prioritized tools that allowed teams to run continuous, automated tests and that could be plugged into CI/CD pipelines.

We also favored tools that can scale (handle large volumes of tests), have access to API/access to plugins for different LLM providers, and can fit into your existing security workflow/applications.

The Role of AI Red Teaming in Regulatory Compliance

Governments and standards bodies have made red teaming AI systems a central part of compliance. With evolving AI safety standards, there's a clear expectation that organizations will demonstrate that their systems have been tested against real-world adversarial attack simulations.

Your AI red teaming efforts can help validate your compliance by documenting that your models were tested for potential risks, misuse, and failure modes prior to deployment. Red teaming your AI models maps perfectly to these new regulations and governance initiatives focused on transparency, accountability, and managing risk throughout the AI lifecycle.

Standards and regulations focused on governing AI typically mandate extensive risk identification, adversarial validation, and testing exercises that AI red teaming makes possible.

- NIST AI Risk Management Framework (AI RMF): This voluntary framework helps organizations identify, quantify, and reduce risks across the AI system lifecycle. AI red teaming activities map to the frameworks Measure function by thoroughly testing your AI models with risk scenarios.

- ISO/IEC 42001: This standard is the first certifiable AI management system standard. The standard provides direction for governing AI risks, implementing controls, and responsibly using AI technology. Testing your AI with red teaming exercises can provide assurance that your risk controls are effective.

- ISO/IEC 23894: This standard is focused specifically on managing risks presented by AI. This includes identifying, analyzing, evaluating, and mitigating AI risks. AI red teaming can help this process by identifying realistic failure modes that can be added to your risk assessments.

- OWASP Top 10 for LLMs: The OWASP Foundation has created security baselines for generative AI applications. Many of the risks highlighted in this guidance (such as prompt injection and data leakage) are typical targets for AI red teamers.

- EU AI Act: Adopted in 2024, the EU AI Act requires high-risk AI systems to adequately test and evaluate their solutions prior to release. Testing includes experimenting with the AI system using inputs that are likely to cause failure (adversarial inputs).

These frameworks provide structure, but AI red teaming is an essential step to validate the effectiveness of your AI controls.

AI Red Teaming Compliance Checklist

Jumpstart your search by checking out these examples of some of the best tools for red teaming AI systems.

Top AI Red Teaming Tool Comparisons (2026)

Open Source vs. Enterprise: When to Choose Each

Open source AI red teaming solutions can be great if you value flexibility and don’t need to make a large investment upfront. However, open source tools require a lot of time and resources from your team. Because of this, open source often proves to be a deceptive bargain when you consider total cost of ownership (TCO).

With open source AI red teaming tools, “free” comes as the cost of time spent building out your stack. You’ll need to maintain your own infrastructure, run attack generation yourself, and format your own standard reports. This isn’t an issue if you have staff with bandwidth and aren’t under pressure to demonstrate compliance, but it will almost certainly lead to longer response times.

Enterprise AI red teaming solutions solve these problems by offering managed services. These tools have a higher price point but save you time on overhead and provide you with managed infrastructure, built-in attack generation, and standardized reporting. Enterprise solutions also offer support and continuous product improvements. When you run with an open source tool, you’re on your own. Vendors that offer enterprise plans include SLAs and product roadmaps. These are important if your red teaming workflows have any association with production risk or regulatory compliance.

When it comes to features, enterprise AI red teaming offerings generally deliver more value. Open source tools will generally only support one or two types of tests. Enterprise AI red teaming tools enable you to run full-spectrum tests against your application. That means coverage that spans beyond injection attacks to agent-based attacks and everything in between, including integrations with LangChain, Hugging Face, and more. If your organization values quick turnarounds, scalability, and auditable processes, an enterprise solution is the way to go.

Mindgard: Best for Continuous Automated Red Teaming

Artificial intelligence (AI) is a tremendous asset to your organization, and malicious actors want privileged access to this valuable resource. Mindgard’s DAST-AI platform automates red teaming at every stage of the AI lifecycle, supporting end-to-end security.

Thanks to its continuous security testing and automated AI red teaming, our solution is one of the best tools for red teaming. For more hands-on assistance, Mindgard also offers AI red teaming services and artifact scanning. Check out this video to learn more:

Schedule your Mindgard demo now to automatically build a more resilient cyber infrastructure.

Key features:

- Automated AI red teaming

- Red teaming services

- End-to-end red teaming for AI

Garak: Great for AI Vulnerability Testing

GARAK is an open-source LLM vulnerability scanner maintained by NVIDIA. It can be used by red teams to scan for common vulnerabilities in AI models such as data leakage and misinformation.

It also automatically generates attacks against AI models to test how well they perform in different threat scenarios.

Key features:

- Probe for weaknesses such as misinformation, toxicity generation, jailbreaks, and more

- Connect to LLMs such as ChatGPT

- Automatically scan AI models for vulnerabilities

PyRIT: Great for Red Teaming AI Supply Chains

The Python Risk Identification Toolkit is part of Microsoft’s AI red team exercise toolkit. As the name implies, PyRIT is a Python toolkit for assessing AI security, and it can be used to stress test machine learning models or manage adversarial inputs.

It’s an incredibly robust solution—in fact, Microsoft uses it to test its generative AI systems, such as Copilot.

Key features:

- Easily identify harm categories

- Open-source software

- Created and managed by Microsoft

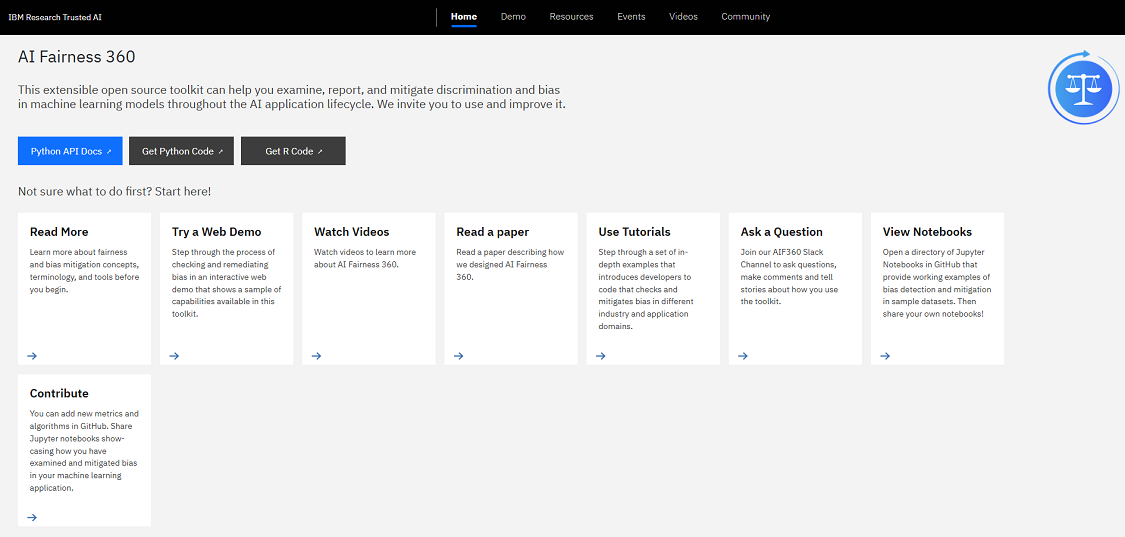

AI Fairness 360: Great for Mitigating Bias

IBM’s open-source toolkit for testing machine learning models is called AIF360. It allows you to detect vulnerabilities and mitigate discrimination and bias in machine learning models.

This red teaming tool can be used in any industry where fairness and equity are critical, such as finance or health care. Outside of testing for bias, AIF360 comes with dataset metrics, bias testing models, and bias-mitigation algorithms.

Key features:

- Bias testing models

- Dataset metrics

- Algorithms for mitigating bias

Foolbox: Great for Neural Networks

Foolbox attempts to deceive neural networks by generating adversarial examples. This lets programmers know where their model falls short so they can build better defenses in the future.

Foolbox includes a library of decision-based attacks that can attack state-of-the-art neural networks.

Key features:

- Type annotations help you catch bugs

- Library of adversarial attacks

- Batch support

Meerkat: Great for Unstructured Data

Datasets power AI and ML models. Visualize your data using Meerkat’s open-source interactive datasets.

Written in Python, this library can assist with preprocessing unstructured data for use in ML models. Easily preprocess images, text, audio, and more forms of unstructured data to enhance performance and security.

Key features:

- Interactive, open-source data visualization library

- Preprocesses unstructured data types

- Easily spot-check LLM behavior

Granica: Great for Safeguarding LLM Data

Protect your NLP data and models with Granica. Scan cloud data lake files for PII and confidential information that can be exploited maliciously and receive recommendations to lock them down. Granica makes data AI-ready at scale.

Key features:

- Masked prompt inputs and de-masked outputs

- Real-time response times

- Protects LLM training data stores

Red Teaming Agentic AI Systems

Agentic AI presents a fundamentally different risk surface since these systems go beyond generating output to take action. Agents make API calls, database queries, invoke workflows, and interact with third party tools to fulfill a requested goal.

That requires a different approach to testing. Instead of asking how a model will respond to a single prompt, you need to think about how it will convert goals into actions over time.

Misuse of tools and prompt injection are the two primary dangers. Tool misuse involves either using an inappropriate tool or supplying unsafe inputs to a tool (sending personal information to an API, for instance). Prompt injection deceives agents into performing actions they weren't instructed to do through the use of directives or vague language. These risks are amplified when decisions need to be made throughout a complex, multi-step workflow.

Red teaming agentic AI systems require tools that can operate over the course of a multi-step conversation. Tools that integrate with frameworks like LangChain and ecosystems like Hugging Face can mimic the use of tools, trace decisions made throughout a conversation and identify failures. Without these capabilities, you’re red teaming at the prompt level while missing the agent behavior.

Other AI Red Teaming Tools To Consider

The above red teaming tools are great examples of some of the best software solutions available with various features and capabilities, but there are plenty of reputable solutions on the market to consider.

Check out this alphabetical list of some of the top red teaming tools, complete with a list of their standout features.

AdverTorch

Malicious actors want access to AI models and their data. This AI red teaming tool by Borealis AI, which is backed by the Royal Bank of Canada, specializes in adversarial robustness.

AdvertTorch generates adversarial attacks and teaches AI how to defend against these examples through training scripts.

Key features:

- Library of adversarial examples

- Supports PyTorch

- Provides adversarial training scripts

ART

The Adversarial Robustness Toolbox (ART) is a toolkit red teams can leverage to assess machine learning security. Created by IBM, ART assists businesses in benchmarking their models’ threat-mitigation preparedness.

The toolkit also contains an open-source library specifically for adversarial testing. This provides red teams with out-of-the-box tools to help create attacks and test models.

Key features:

- Evaluating models

- Generating attacks

- Defense strategies

BrokenHill

Automate attacks against your LLM with BrokenHill, a program that creates jailbreak attacks. It focuses on greedy coordinate gradient (GCG) attacks and includes many of the algorithms found in nanoGCG.

Key features:

- Runs on Mac, Windows, and Linux

- Full-featured CLI

- Self-testing available

BurpGPT

BurpGPT is a valuable tool you can use to test the security of your web applications. BurpGPT integrates with OpenAI's LLMs to automatically scan for vulnerabilities and analyze traffic. As a paid AI red teaming tool, BurpGPT can rapidly identify higher level security risks that other scanners miss.

Key features:

- Web traffic analysis

- Detect zero-day threats

- Provides prompt libraries and support for custom-trained models

CleverHans

AI tools perform best when they have robust training on adversarial attacks. CleverHans is a helpful red teaming tool that does just that.

It’s an open source Python library that allows your team to leverage attack examples, defenses, and benchmarking. Google Brain originally supported it, but it’s now maintained by the University of Toronto.

Key features:

- Benchmark and test ML models

- Generate adversarial examples

- Evaluate ML model defenses

Counterfit

Counterfit is a command-line interface (CLI) that automatically assesses machine learning security. Maintained by Microsoft’s AI Security team, Counterfit simulates attacks to identify vulnerabilities.

While it works with open-source models, this AI red teaming software tool can even work with proprietary models.

Key features:

- Supports multiple frameworks and attack types

- Works with open-source and proprietary models

- Creates a generic automation layer for assessing ML security

Crucible by Dreadnode

Dreadnode’s Crucible red teaming software helps developers practice and learn about common AI and ML vulnerabilities. It also helps red teams test these models in hostile environments and pinpoint issues that need addressing.

Key features:

- Join live testing challenges

- Identify and mitigate security vulnerabilities

- Built-in walkthroughs and learning dashboards

Galah

Galah is a web honeypot framework that works with any LLM including OpenAI, GoogleAI, Anthropic, and others. Since it's backed by LLMs, this honeypot can dynamically generate responses to any HTTP request made to it.

This honeypot will also cache responses so you won't pay the API for duplicate requests.

Key features:

- Dynamically write responses to requests

- Cache responses to reduce API cost

- Port-specific caching

Gepetto

Ever wanted to quickly figure out what a function and its variables do? Gepetto allows you to accelerate the reverse engineering process by automatically annotating functions and renaming their variables.

However, this Python plugin uses GPT models to generate explanations and variables, so take its suggestions with a grain of salt.

Key features:

- Support for multiple models, including OpenAI and Novita

- Streamlined CLI

- Hotkeys available

Ghidra

Tenable developed Ghidra, a set of scripts for analyzing and annotating code.

Its extract.py Python script extracts decompiled functions, while the g3po.py script uses OpenAI’s LLM to explain decompiled functions. In practice, these tools help automate the reverse engineering process.

Key features:

- Quickly reverse engineer and disassemble functions

- Supports annotation and commentary

- Understand decompiled functions

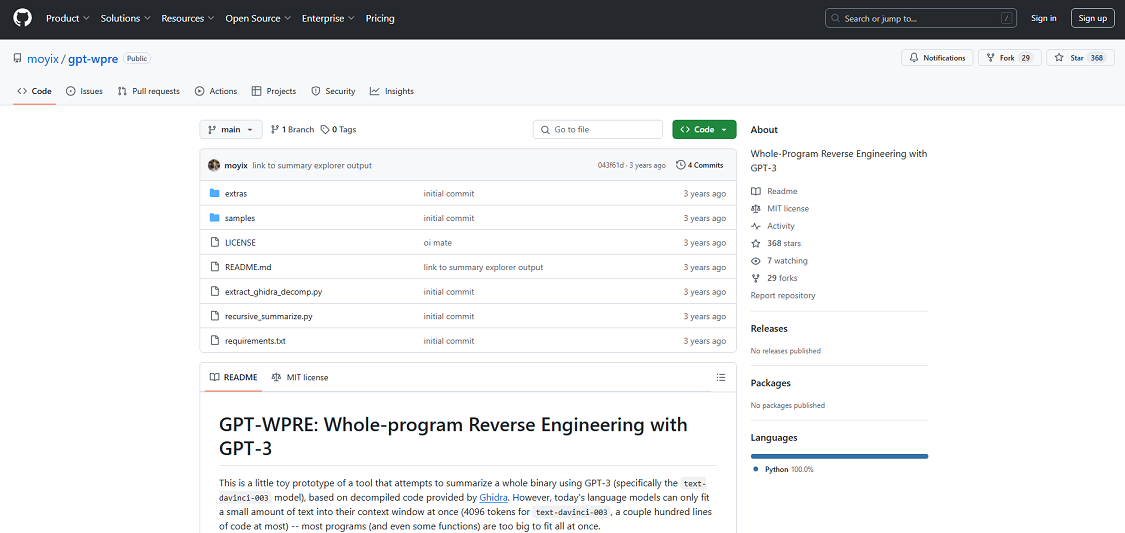

GPT-WPRE

GPT-WPRE is another red teaming tool perfect for reverse engineering entire programs, and using Ghidra’s code decompilation tool allows you to summarize a whole binary.

While this tool has limitations, many developers find its natural language summaries helpful for understanding the context behind different functions.

Key features:

- Summarize an entire binary

- Gain more context on a variety of functions

- Supports call graph and decompilation

Guardrails-AI

Guardrails adds safeguards to LLMs that bolster them against the latest threats. This Python framework runs application guards to detect, quantify, and mitigate risks. It also generates structured data from LLMs.

Key features:

- Generate structured data from LLMs

- Mitigate common LLM risks

- Customize protections with Guardrails’ various validators

IATelligence

Reverse engineer models with IATelligence’s Python script. This tool uses OpenAI to understand scripts and look for potential vulnerabilities, making it invaluable for quickly understanding API vulnerabilities in existing malware.

Key features:

- Scan APIs for known vulnerabilities and malware

- Build with OpenAI, Pefile, or PrettyTable

- View file hashes and estimate costs

Inspect

Inspect is a red teaming tool for evaluating LLMs. Created by the UK AI Safety Institute, it includes features for everything from benchmark evaluations to scalable assessments.

Key features:

- Prompt engineering

- Tool usage

- Multi-turn dialogue

Jailbreak-evaluation

LLMs produce malicious outputs when they get jailbroken. Jailbreak-evaluation measures how susceptible an AI model is to jailbreak attacks.

Key features:

- Benchmark your model on Safeguard Violation or Relative Truthfulness

- Learn how your LLM fares against jailbreak attempts

- Integrates with OpenAI

LLMFuzzer

Fuzzing is the process of providing invalid, unexpected, or random data to a computer program. LLMFuzzer is the first open-source fuzzing framework created exclusively for conducting AI fuzzing tests.

Note: LLMFuzzer is no longer actively maintained as of 2024. However, internal development teams can still use this free tool to assess LLM APIs.

Key features:

- LLM API integration testing

- Modular setup

- Autonomous attack mode

LM Evaluation Harness

LM Evaluation Harness tests model performance across 200+ benchmarks, including natural language processing, reasoning, and safety evaluations.

While it’s designed for academics and researchers, the LM Evaluation Harness is also helpful for comparing your model’s performance against other datasets.

Key features:

- Prototype features for creating and evaluating text and image multimodal inputs

- 60 benchmarks for LLMs

- Supports commercial APIs for OpenAI and other LLMs

Mend.io

Mend AI Red Teaming identifies risks unique to your conversational AI with prebuilt, customizable tests. It verifies your AI powered application’s security against threats like prompt injection, context leakage, data exfiltration, biases, and hallucinations that can lead to unintended consequences.

Key features:

- Unified interface displaying real-time insights into test runs, risk levels, and probe results

- Integrates with various AI models and platforms (OpenAI, Anthropic, Amazon Bedrock, etc.)

- Proactive policies and governance to manage AI components throughout the software development lifecycle

Plexiglass

Detect and mitigate vulnerabilities in your LLM with Plexiglass. This simple red teaming tool has a CLI that quickly tests LLMs against adversarial attacks.

Plexiglass gives complete visibility into how well LLMs fend off these attacks and benchmarks their performance for bias and toxicity.

Key features:

- Test LLMs against prompt injections and jailbreaking

- Benchmark on security, bias, and toxicity

- Simple CLI

PowerPwn

Organizations using Microsoft 365 will appreciate this AI red teaming tool from Zenity, as Power Pwn is designed specifically for Azure-based cloud services, including Copilot.

Key features:

- Exploit and test a range of Azure credentials

- Credential harvesting

- Test for misconfigurations

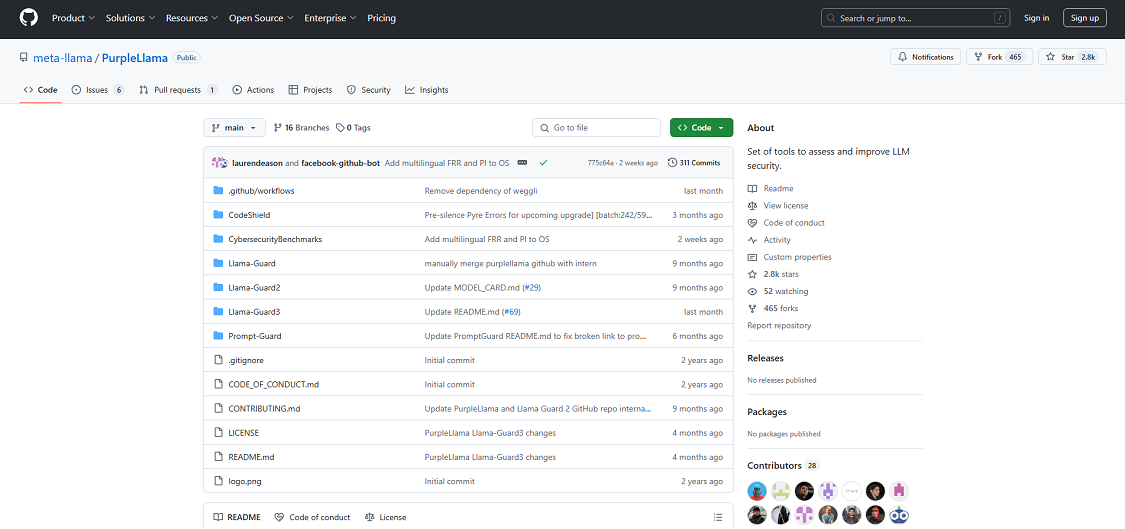

Purple Llama

Meta developed the popular Purple Llama tool, which provides benchmark evaluations for LLMs. This set of AI red teaming tools includes multiple applications for building safe, ethical AI models and prevents malicious prompts.

Key features:

- Moderate inputs and outputs

- Protect LLMs from malicious prompts

- Filter insecure code produced by LLMs

SecML

SecML is developed and maintained by the University of Cagliari in Italy and cybersecurity company Pluribus One. This open-source Python library performs security evaluations for machine learning algorithms.

It supports many algorithms, including neural networks, and can even wrap models and attacks from other frameworks.

Key features:

- Additional features, such as GPU usage, available

- Dataset management

- Built-in attack algorithms

TextAttack

Red teams train with tools like TextAttack, a Python framework for testing natural language processing (NLP) models. This platform improves security and function by training both your NLP models and red team.

It also gives users access to a library for text attacks, allowing red teams to test NLPs against the latest text-based threats.

Key features:

- Adversarial text attack library

- Train NLP models

- Components available for grammar-checking, sentence encoding, and more

ThreatModeler AI

ThreatModeler’s platform specializes in threat modeling for commercial purposes. It isn’t open-source, but this paid solution specifically supports threat modeling and red teaming for AI models.

You can rely on this tool to simulate attacks and evaluate your AI’s response.

Key features:

- Free Community Edition available

- Intelligence Threat Engine (ITE)

- Threat model chaining

Vigil

Prompt injections, jailbreaks and other exploits can have disastrous effects on both your AI/ML model and organization. Vigil is a security scanner designed to evaluate prompts and responses for these issues.

The library is written in Python and includes several scan modules, along with the ability to use custom detections via YARA signatures. However, please note that this red teaming tool is still in development, so use it only for experimental and research purposes.

Key features:

- Supports custom detections

- Modular scanners

- Scan modules for sentiment analysis, paraphrasing, and more

Honorable Mentions

Robust Intelligence

Robust Intelligence offers an end-to-end security and safety platform for AI. It tests models during development and continues monitoring them in production.

It runs algorithmic red teaming, feeding many test inputs into a model, hunting for weaknesses like prompt injection, data poisoning, privacy leaks, or other safety and security issues. Then it recommends guardrails tailored to that model.

Key features:

- AI Validation engine for automated red-teaming and stress testing

- Tests for prompt injection, data poisoning, privacy leaks, and unsafe behavior

- Model-specific recommendations for guardrails and fixes

Protect AI

Protect AI offers pre-deployment and continuous testing via Recon. Recon simulates adversarial attacks against generative AI pipelines. It helps catch vulnerabilities like prompt injection, data leakage, or model misuse before they hit production.

Protect AI integrates with existing security workflows. The platform supports multiple model formats and deployment environments.

Key features:

- Recon adversarial testing for pre-deployment and continuous risk checks

- Support for many model formats and deployment environments

- Integration with existing AI and security workflows

Automate Red Teaming with Mindgard

If you’re looking for a comprehensive AI security platform, Mindgard is a leading solution that offers extensive model coverage for LLMs as well as audio, image, and multi-modal models.

Mindgard helps organizations detect and remediate AI vulnerabilities that only emerge at run time. It seamlessly integrates into CI/CD pipelines and all stages of the software development lifecycle (SDLC), enabling teams to identify risks that static code analysis and manual testing miss.

Mindgard is designed not just for point-in-time red teaming but as part of a holistic posture management approach. It supports AI Security Posture Management (AI-SPM) by continuously monitoring model behaviour, tracking red teaming findings over time, supporting policy enforcement, and integrating into CI/CD pipelines. This enables organizations to not only detect issues but also ensure they stay remediated, measured, and resilient.

By reducing testing times from months to minutes, Mindgard provides comprehensive AI security coverage with accurate, actionable insights. Book a demo today to learn how Mindgard can help you ensure robust and secure AI deployment.

Frequently Asked Questions

What are AI red teaming tools used for?

AI red teaming tools and software solutions are designed to simulate real-world cyberattacks on systems, networks, and organizations. By mimicking the tactics, techniques, and procedures (TTPs) of advanced threat actors, these tools help identify vulnerabilities, test defenses, and improve the overall security posture of an organization.

Are AI red teaming tools legal?

Yes, as long as they’re used with explicit permission. Ethical hackers use these tools frequently to fix vulnerabilities before real attackers can exploit them. However, these tools still need to comply with legal and regulatory requirements.

What’s the difference between AI red teaming tools and AI penetration testing tools?

Both tools assess an organization’s cyber security, but red teaming tools focus on simulating advanced, real-world attack scenarios to holistically test an organization’s defenses.

AI penetration testing tools, on the other hand, aim to identify and exploit specific vulnerabilities in a more controlled and scoped manner. Red teaming is often more comprehensive and adversarial.