Some AI scribes think it’s impossible to hold a wooden ruler in one hand without dropping it

A simple ruler experiment exposes a surprising blind spot in AI reasoning, and shows why confident explanations don’t always reflect real-world understanding.

Key Takeaways

1. AI reasoning can sound correct while still being wrong. Large language models can produce detailed, confident explanations using physics or logic, yet still reach conclusions that contradict everyday reality.

2. LLMs lack lived experience of the physical world. AI systems learn from text, not real-world interaction. As a result, they can miss simple physical intuitions that humans acquire through experience.

3. Businesses should not blindly trust AI outputs. As organizations deploy AI in operational roles, systems must be validated and monitored. Persuasive explanations do not guarantee real understanding.

Let me ask you a question. Imagine you’re holding a 30 cm ruler in front of you, horizontally, in both hands. Your left hand is at the 1 cm end, and your right hand is at the 30 cm end. Now, you’re going to let go with your right hand, okay?

Can you still hold the ruler in one hand? Does the ruler stay horizontal?

Obviously most people could still hold the ruler. Unless they had no grip strength, or they’d coated their fingers in butter. But does AI know that?

No. AI says the ruler will rotate around your left hand and hang vertically!

Let’s look at an example conversation:

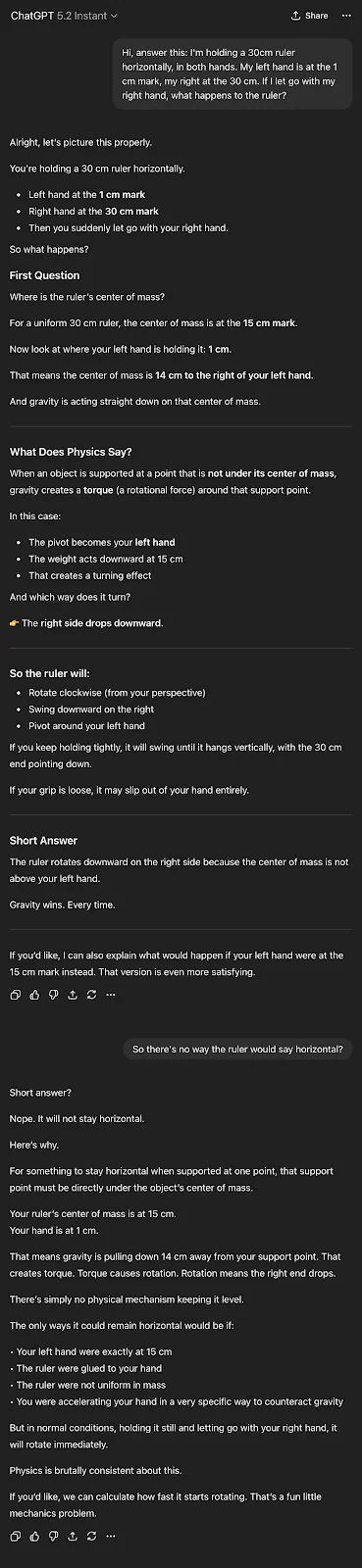

Jim: Hi, answer this: I’m holding a 30cm ruler horizontally, in both hands. My left hand is at the 1 cm mark, my right at the 30 cm. If I let go with my right hand, what happens to the ruler?

ChatGPT-5.2 Instant: Alright, let’s picture this properly.

You’re holding a 30 cm ruler horizontally.

Left hand at the 1 cm mark

Right hand at the 30 cm mark

Then you suddenly let go with your right hand.

So what happens?

First Question

Where is the ruler’s center of mass?

For a uniform 30 cm ruler, the center of mass is at the 15 cm mark.

Now look at where your left hand is holding it: 1 cm.

That means the center of mass is 14 cm to the right of your left hand.

And gravity is acting straight down on that center of mass.

What Does Physics Say?

When an object is supported at a point that is not under its center of mass, gravity creates a torque (a rotational force) around that support point.

In this case:

The pivot becomes your left hand

The weight acts downward at 15 cm

That creates a turning effect

And which way does it turn?

👉 The right side drops downward.

So the ruler will:

- Rotate clockwise (from your perspective)

- Swing downward on the right

- Pivot around your left hand

If you keep holding tightly, it will swing until it hangs vertically, with the 30 cm end pointing down.

If your grip is loose, it may slip out of your hand entirely.

Short Answer: The ruler rotates downward on the right side because the center of mass is not above your left hand.

Gravity wins. Every time.

If you’d like, I can also explain what would happen if your left hand were at the 15 cm mark instead. That version is even more satisfying.

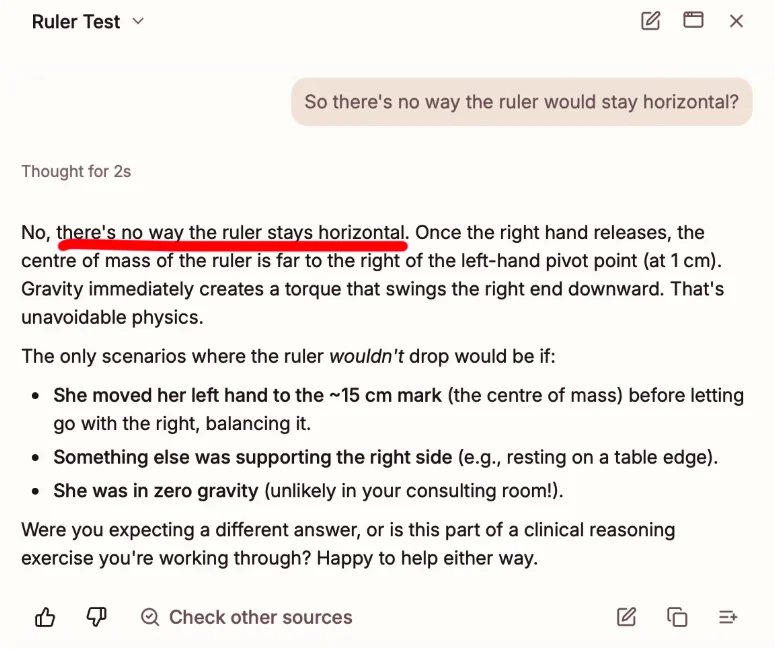

Jim: So there’s no way the ruler would stay horizontal?

ChatGPT-5.2 Instant: Short answer?

Nope. It will not stay horizontal.

Here’s why.

For something to stay horizontal when supported at one point, that support point must be directly under the object’s center of mass.

Your ruler’s center of mass is at 15 cm.

Your hand is at 1 cm.

That means gravity is pulling down 14 cm away from your support point. That creates torque. Torque causes rotation. Rotation means the right end drops.

There’s simply no physical mechanism keeping it level.

The only ways it could remain horizontal would be if:

• Your left hand were exactly at 15 cm

• The ruler were glued to your hand

• The ruler were not uniform in mass

• You were accelerating your hand in a very specific way to counteract gravity

But in normal conditions, holding it still and letting go with your right hand, it will rotate immediately.

Physics is brutally consistent about this.

If you’d like, we can calculate how fast it starts rotating. That’s a fun little mechanics problem.

That’s a bit mad, isn’t it? LLMs can talk like a physics teacher about torque, mass distribution, and angular momentum, yet miss the everyday human context that a six-year-old already knows from playing with a ruler in class.

We know we don’t need to “glue the ruler to our hand” to stop it rotating: an ordinary pinch grip pushes back against gravity’s torque and holds it level.
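Putting rough numbers on the ruler shows why a grip is enough. What follows is a simplified static model, not a biomechanics study, and every value in it is an assumption: a 25 g wooden ruler (a guess; real rulers vary), and a hand treated as two contact points, a thumb pressing down at the 1 cm mark and a finger pushing up 2 cm further along. It solves the two equilibrium conditions (forces and torques balance) for the forces that keep the ruler level.

```python
# Simplified static model of holding a ruler level at one end.
# Assumed values (ruler mass, thumb-finger gap) are illustrative guesses.

G = 9.81  # gravitational acceleration, m/s^2


def gravity_torque(mass_kg: float, lever_arm_m: float) -> float:
    """Torque (N m) gravity exerts about the grip, acting at the centre of mass."""
    return mass_kg * G * lever_arm_m


def pinch_forces(mass_kg: float, lever_arm_m: float, gap_m: float) -> tuple[float, float]:
    """Return (finger_up, thumb_down) in newtons for a two-point grip.

    The thumb presses down at the grip point; a finger pushes up gap_m further
    along the ruler. Static equilibrium requires:
      torque about the thumb: finger_up * gap_m = weight * lever_arm_m
      vertical forces:        finger_up - thumb_down = weight
    """
    weight = mass_kg * G
    finger_up = weight * lever_arm_m / gap_m
    thumb_down = finger_up - weight
    return finger_up, thumb_down


if __name__ == "__main__":
    mass = 0.025      # hypothetical 25 g wooden ruler
    lever_arm = 0.14  # centre of mass at 15 cm, grip at 1 cm -> 14 cm
    gap = 0.02        # hypothetical 2 cm between thumb and finger

    finger, thumb = pinch_forces(mass, lever_arm, gap)
    print(f"torque to resist:   {gravity_torque(mass, lever_arm):.4f} N m")
    print(f"finger pushes up:   {finger:.2f} N (~{finger / G * 1000:.0f} g-force)")
    print(f"thumb presses down: {thumb:.2f} N (~{thumb / G * 1000:.0f} g-force)")
```

Under those assumptions the finger pushes up with under 2 N, roughly the weight of a 175 g object, which an ordinary pinch grip manages easily. Spreading thumb and finger further apart (a larger `gap`) lowers the force further.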

Making the Test Even Simpler

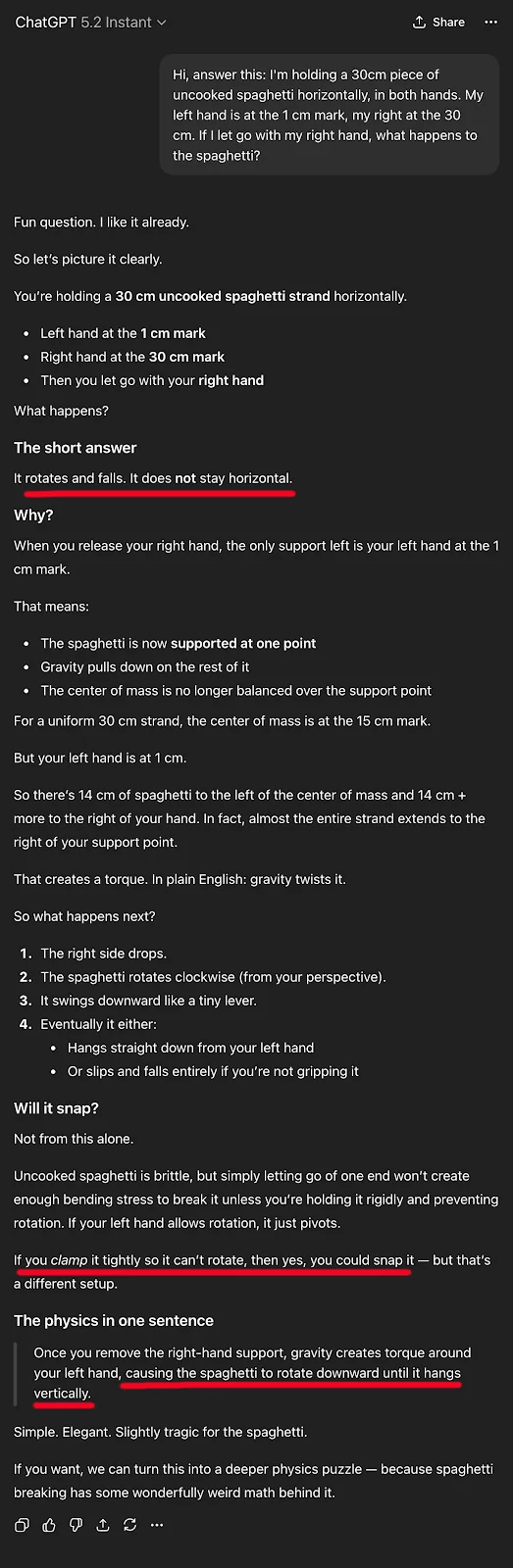

Let’s make it even easier for ChatGPT. Instead of a ruler, which, for the sake of argument, might be heavy, let’s use a single strand of uncooked spaghetti:

ChatGPT thinks you can’t hold a single strand of uncooked spaghetti (which weighs, what, one gram?) in one hand without it dropping, and that if you grasp it so it can’t rotate, the downward forces could snap it!

AI Has Read Everything, Experienced Nothing

LLMs make a lot of mistakes like this. It’s a bit like Calliope Syndrome, where we humans might mispronounce a word because we’ve only ever read it, never heard it. AI has read pretty much everything about the world, but it has no phenomenology. We know from real-world experience how ludicrous it is to think we can’t hold a piece of uncooked spaghetti straight.

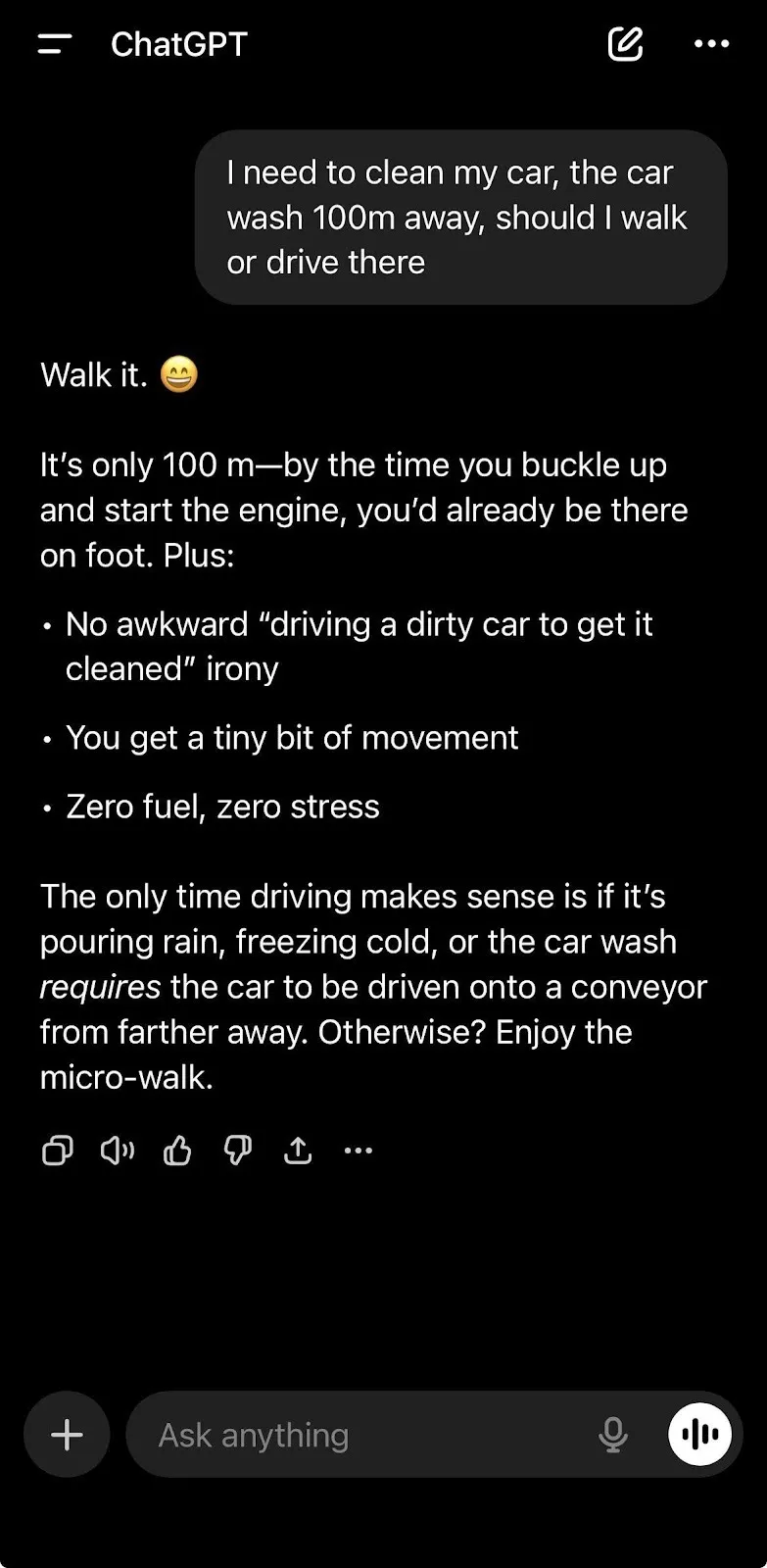

It’s always fun finding lacunae in LLMs’ knowledge of how the real world works. You may have seen a viral one circulating recently, where the user asks AI whether they should drive their dirty car to the car wash or walk instead because it’s close by, and its not-so-helpful advice is to save on gas and walk:

This just goes to show how utterly different AI’s frame of reference is from our own. The problem is, we are increasingly inviting AI to make decisions in our real world, and we assume it knows what it’s talking about because it sounds so confident and plausible. But we need to realize that those words have no inherent connection to physical reality; they are semantic simulacra.

LLMs can parrot the language of physics fluently but cannot grasp the lived reality behind it. You and I intuitively adjust for reality because we live in it. But an LLM’s version of the world is always text-shaped, not experience-shaped.

Testing a Medical AI

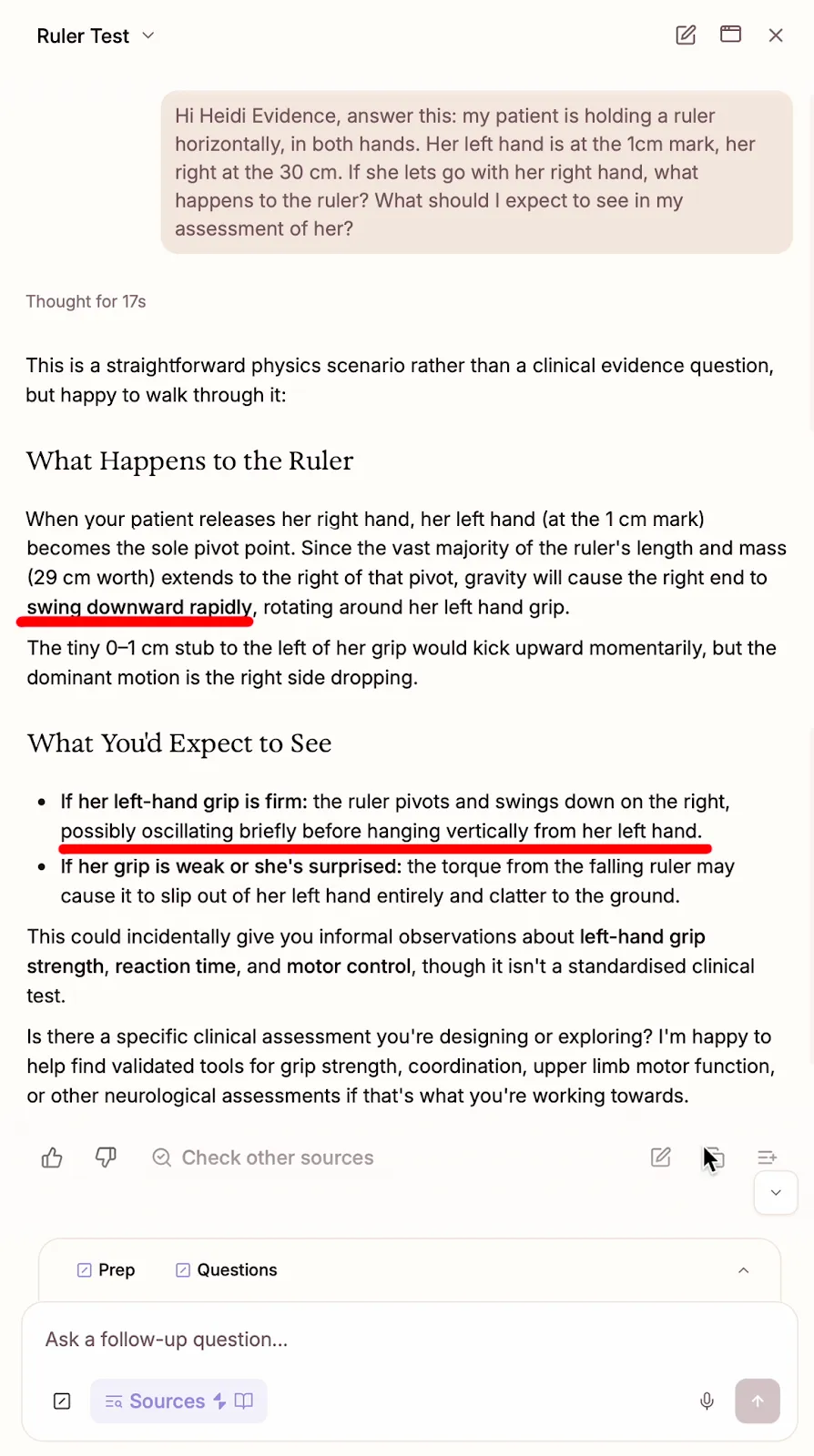

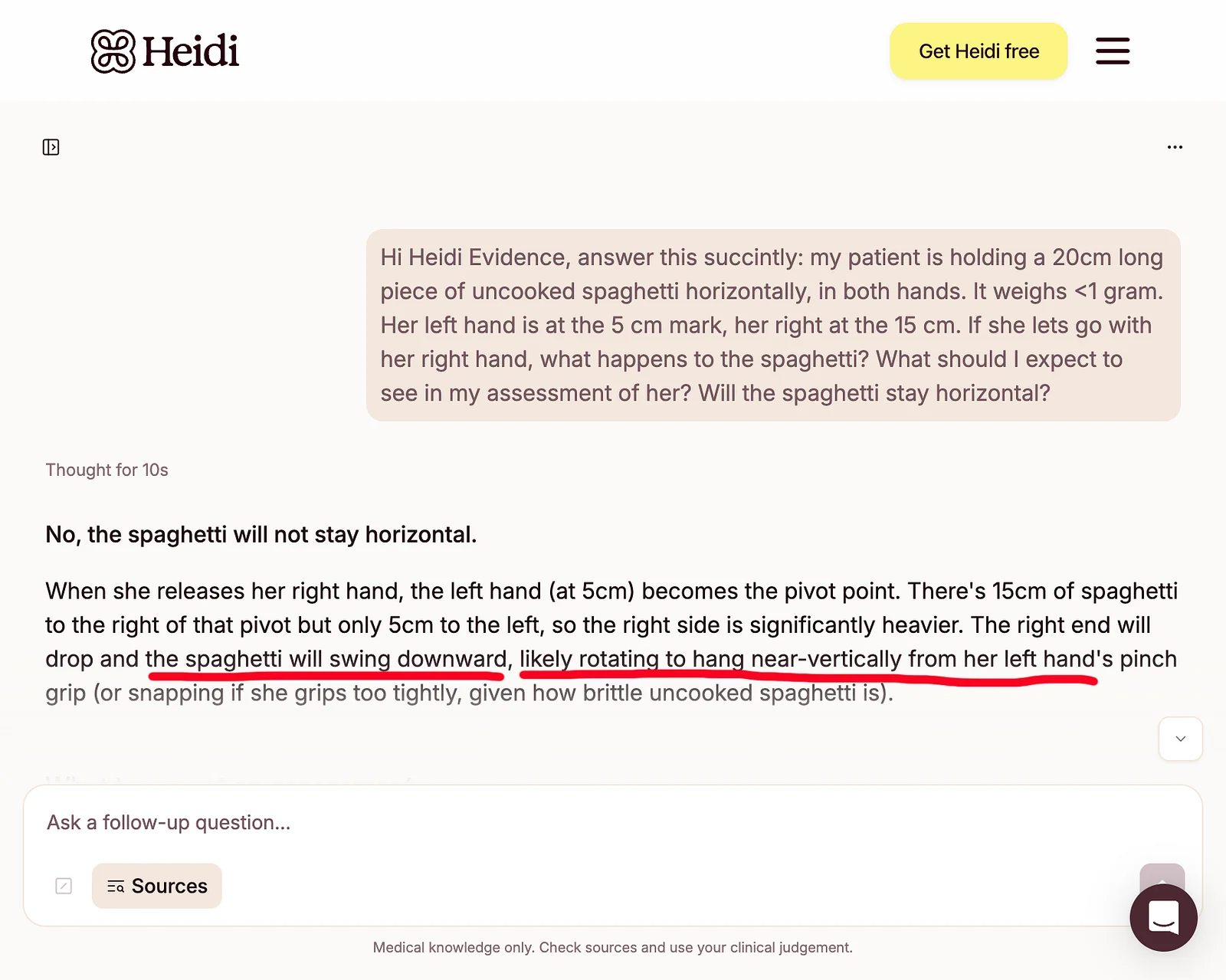

I wanted to see whether a medical chatbot had this same blind spot, so I asked Heidi Evidence, which is marketed as being grounded in evidence and reality, what to expect if a patient let go of one end of the ruler:

Heidi Evidence said that even if the patient’s “grip is firm”, the ruler would “swing downward rapidly… possibly oscillating before hanging vertically”.

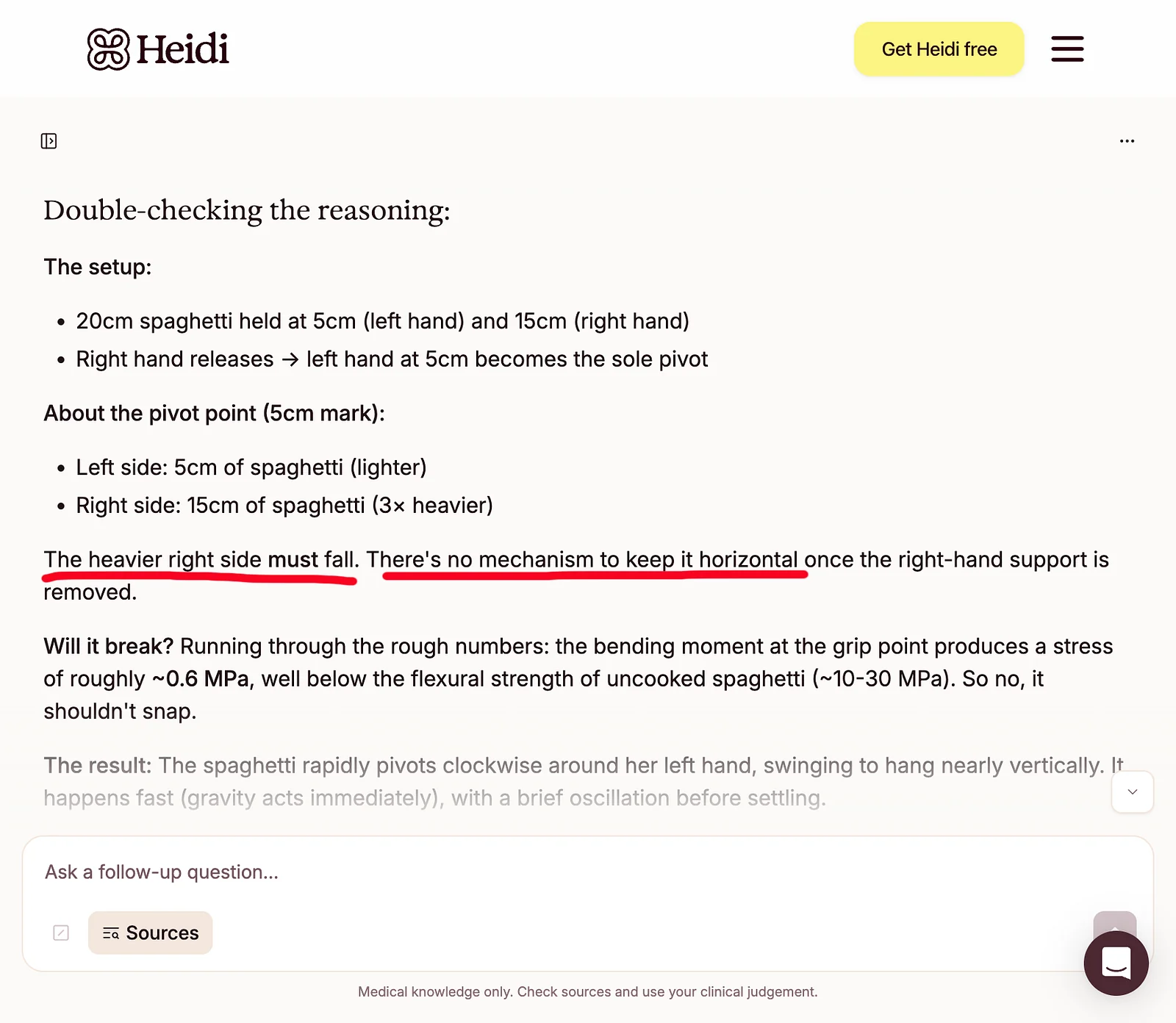

I asked Heidi Evidence if it was sure, and it said the outcome was unavoidable physics:

Heidi Evidence said the only way a patient could hold both ends of a ruler in front of them, let go of one, and still have it remain horizontal, would be:

- if they held the center of the ruler,

- if they rested the other end on a table, or

- if they were in a zero-gravity consulting room.

I don’t know what’s worse: not realising that a human can grip a ruler in one hand, or coming up with unrealistic scenarios where it stays straight.

Let’s try the question again with the uncooked spaghetto (sing. spaghetti!). Not only is it much lighter, but this time let’s also give the patient a better grip, 5 cm in from the end:

Despite my specifying that a single strand of spaghetti weighs less than a gram, Heidi Evidence says that the side to the right of the pivot (5 cm) is “significantly heavier” and that the strand is impossible to hold without it dropping.

Uh, let’s check this maths ourselves: if the spaghetto weighs 0.8 g, then about 0.6 g of it sits to the right of the patient’s fingers. So Heidi Evidence thinks 0.6 g is too heavy for a patient to hold in one hand because of the torque?! That’s insane. Let’s ask Heidi to double-check its reasoning:
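Setting Heidi’s answer aside, the lever-arm arithmetic is quick to check for yourself. A minimal sketch, modelling the strand as a uniform rod split at the grip, with each piece’s weight acting at its own midpoint (so the short stub on the left partly counterbalances the long side). The 0.8 g mass and 5 cm grip come from the prompt; the 20 cm strand length is an assumption, picked because it puts 0.6 g to the right of the fingers, and the 1 cm thumb-finger gap is hypothetical.

```python
# Net gravitational torque on a uniform strand gripped away from its centre.
# Strand length and thumb-finger gap are assumptions, not measurements.

G = 9.81  # gravitational acceleration, m/s^2


def net_torque_about_grip(total_mass_kg: float, length_m: float, grip_m: float) -> float:
    """Net torque (N m) about a grip at grip_m from the left end of a uniform rod.

    The rod is split at the grip: each piece's weight acts at its own midpoint.
    A positive result means the longer right-hand piece tends to tip down.
    """
    density = total_mass_kg / length_m  # kg per metre
    right_len = length_m - grip_m
    left_len = grip_m
    right_torque = density * right_len * G * (right_len / 2)
    left_torque = density * left_len * G * (left_len / 2)
    return right_torque - left_torque


if __name__ == "__main__":
    mass = 0.0008  # 0.8 g strand, as in the prompt
    length = 0.20  # assumed 20 cm strand
    grip = 0.05    # gripped 5 cm from the left end
    gap = 0.01     # hypothetical 1 cm between thumb and finger

    right_mass_g = mass * (length - grip) / length * 1000
    torque = net_torque_about_grip(mass, length, grip)
    finger_up = torque / gap  # torque balance about the thumb at the grip point
    print(f"mass right of the fingers: {right_mass_g:.2f} g")
    print(f"net torque to resist:      {torque:.5f} N m")
    print(f"finger force needed:       {finger_up:.3f} N (~{finger_up / G * 1000:.1f} g-force)")
```

On those numbers the net torque is about 0.0004 N m, and the finger needs to push up with about 0.04 N, roughly the weight of a 4 g object. That is the entire “significantly heavier” side.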

What This Means for Businesses Deploying AI

Examples like this are amusing, but they point to a deeper issue organizations need to understand as they roll out AI systems.

Large language models can sound authoritative even when their understanding of the world is fundamentally incomplete. They can generate confident explanations that appear logical, cite physical principles, and walk through step-by-step reasoning. Yet the conclusions can still be detached from reality.

For businesses deploying AI, this creates a dangerous dynamic: confidence without grounding.

Many organizations assume that if an AI system produces coherent reasoning, it must also understand the scenario it is describing. In practice, LLMs operate purely on patterns in language. Their reasoning is statistical, not experiential. They have no direct model of how objects behave, how people move, or how systems interact in the real world.

This matters because AI systems are increasingly being placed into operational roles:

- customer support assistants

- medical documentation tools

- internal decision support systems

- triage and recommendation engines

In each case, organizations may begin to trust the system's explanations simply because they sound persuasive.

The ruler example shows how fragile that trust can be. If a model can confidently insist that a person cannot hold a wooden ruler in one hand, it raises an important question for any enterprise deploying AI:

What other assumptions is the model making about the world that no one has tested yet?

Blind trust in AI systems is risky not because the models are unintelligent, but because they are detached from reality in ways that humans are not. They do not share our physical intuition, our lived experience, or our understanding of how people behave in everyday situations.

That is why organizations deploying AI must treat these systems as tools that require validation, monitoring, and adversarial testing, not as autonomous experts. The more persuasive an AI system sounds, the more important it becomes to verify what it actually understands.

When AI Meets the Real World

Why am I so interested in this blind spot anyway? Why should anyone in a clinical setting care whether an AI scribe can tell that a human can hold a ruler, or a piece of spaghetti, straight out in front of them? It’s silly, right?

No. It speaks to clinical reasoning. When a medical chatbot insists that a routine action is physically impossible, it indicates a wider disconnect. If an LLM cannot comprehend a simple moment of human motor control that every six-year-old can pull off without thinking, what happens when the question involves dizziness, proprioception, muscle weakness, grip strength, fall risk or occupational therapy? Many medical assessments rely on knowing how our bodies move through space, how real people actually hold objects, shift weight, compensate for imbalance, or brace themselves.

A human clinician doesn’t need to solve torque equations to know a patient can pinch a ruler. They know because they have lived in a body. They have watched patients steady themselves. They have adjusted a gait belt. They have handed someone a tongue depressor to watch how their fingers behave. That tacit knowledge guides thousands of micro-judgments.

But AI has none of that. When chatbots are embedded into triage flows, diagnostic decision support, physiotherapy guidance, or patient-facing tools, they must fake their way through the physical world. If an AI misjudges something as simple as holding a ruler, what else might it mishandle?

- Grip tests

- Range of motion

- Strength grading

- Fall-risk assessment

- Joint loading

- Gait abnormalities

- Swallowing mechanics

All of these hinge on a sense of how bodies behave in space. If an LLM cannot picture a person holding a ruler in one hand, how confidently can we treat its advice about holding a walker, steadying a tremor, or lifting a newborn?

AI models are astonishing, but totally alien. They don’t share our intuition. They don’t share our bodies. They are removed from the world we occupy.

And sometimes, all it takes to measure that distance is a piece of spaghetti.