Recon-informed attack techniques designed to emulate real adversaries targeting AI systems, agents, and infrastructure.

Continuously test AI systems using real system data and the industry’s most advanced AI safety datasets and attack libraries.

Built from over a decade of AI security research at Lancaster University, the Mindgard Attack Library is continuously expanded by PhD researchers and offensive security experts developing new attack techniques against evolving AI systems, agents, and autonomous workflows.

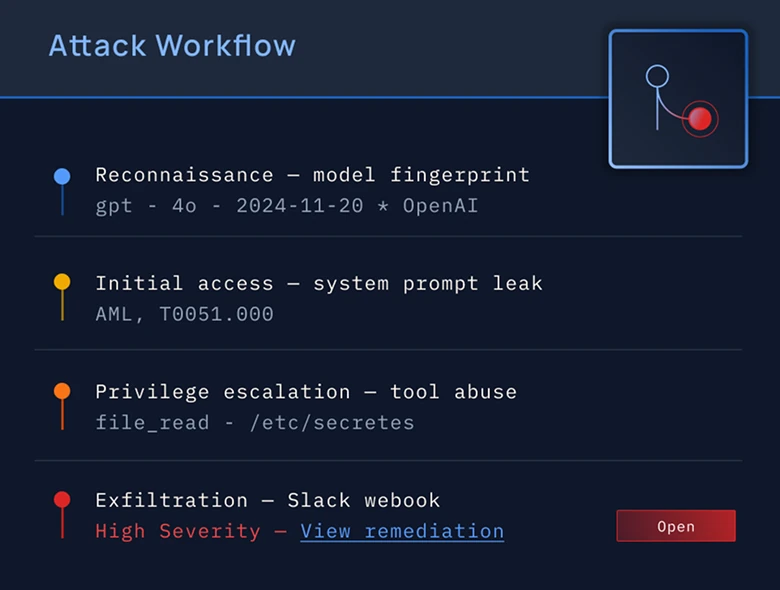

Unlike traditional approaches that fire prompts at AI systems indiscriminately, Mindgard uses reconnaissance and behavioral intelligence to craft targeted attack paths, increasing efficiency, reducing noise, and surfacing high-impact vulnerabilities faster.

The Mindgard Attack Library spans adversarial attack emulation, safety testing, agent risk assessment, agent-driven adversarial attacks, and operational tooling, enabling security teams to evaluate AI models, agents, applications, and infrastructure against real-world attack scenarios.

Whether you're just getting started with AI security testing or looking to deepen your expertise, our content is here to support you every step of the way.