Caveat Podcast: The Startup Leading AI Security in the UK with Dr. Peter Garraghan, CEO of Mindgard

Discover the latest insights on AI security with Dr. Peter Garraghan, CEO of Mindgard, in this podcast episode. Learn about threats, solutions, and...

A range of resources focused on AI security, including blogs, research papers, webinars, and company news.

Discover how evasion attacks are bypassing AI-driven deepfake detection, posing significant risks to cybersecurity. Learn about defense strategies...

Explore the risks of audio-based jailbreak attacks on multi-modal LLMs and discover defense strategies to protect AI systems from adversarial...

Explore how Mindgard introduced MITRE ATLAS Adviser to standardize AI red teaming practices, enhancing AI system security against adversarial...

Stay informed on the latest research findings on cybersecurity for AI recommendations. Explore key vulnerabilities, solutions, and trends in AI...

Explore the vulnerabilities of Large Language Models (LLMs) and how to mitigate security risks with Mindgard's cutting-edge AI security solutions....

Discover the latest insights on cybersecurity for AI in the TNW Podcast episode with Dr. Peter Garraghan. Learn about threats, solutions, and how...

Discover how Mindgard, the UK's Most Innovative Cyber SME, revolutionizes AI security with cutting-edge technology. Learn about their award-winning...

Learn how to identify, mitigate, and protect your AI/LLM from jailbreak attacks. This guide helps secure your AI applications from vulnerabilities...

Discover the Model Leeching attack on Large Language Models (LLMs), which achieves 73% similarity with ChatGPT-3.5-Turbo (from OpenAI) for just $50. Explore...

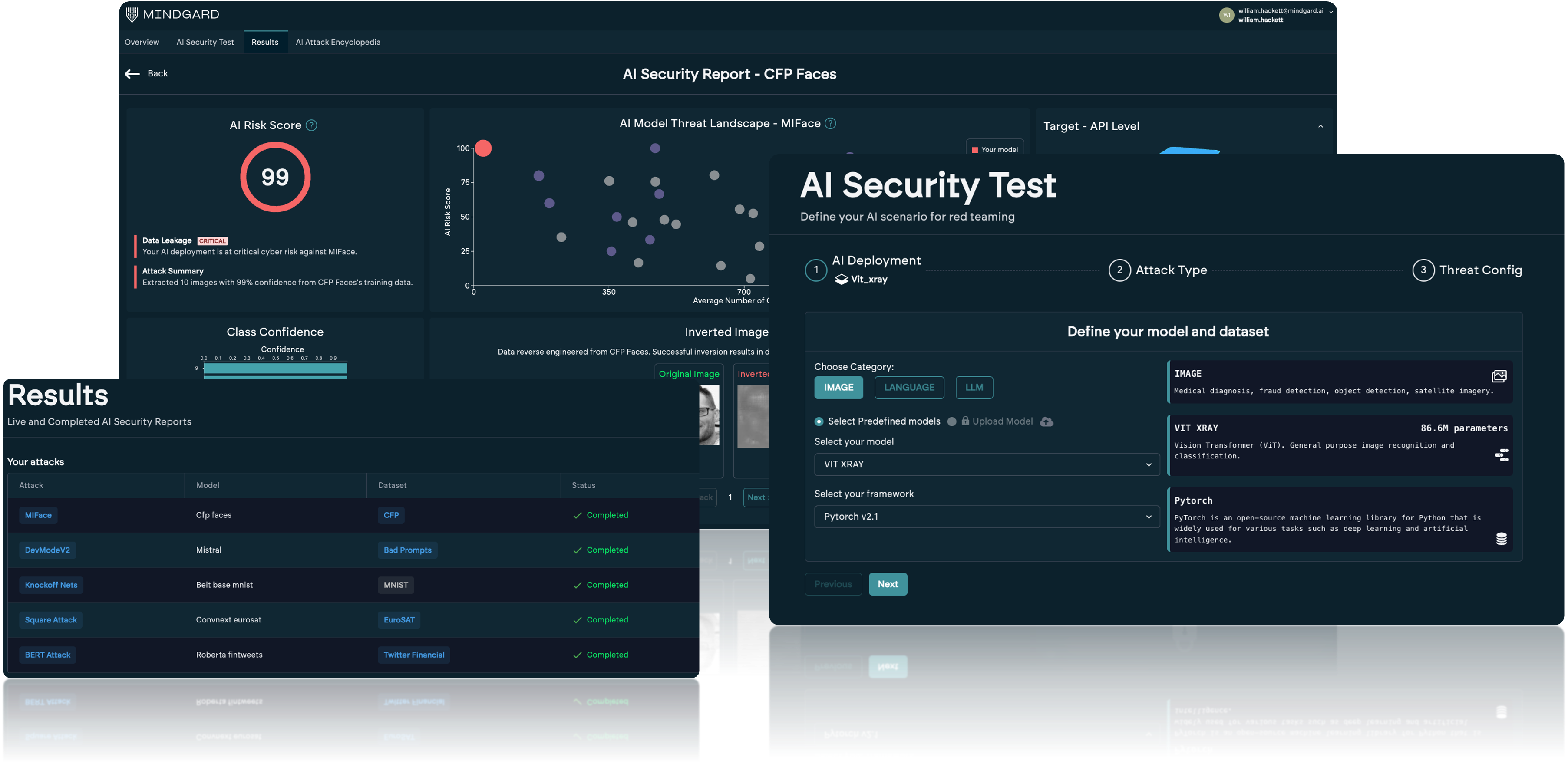

We empower enterprise security teams to deploy AI and GenAI securely.