A technical exploration of modern AI red teaming, examining how probabilistic behavior, classic vulnerabilities, and psychometric steering combine to create real-world AI security risk.

To defeat an adversary, you have to think like one. Mindgard merges hacker creativity with cyber expertise and world-class research to deliver enterprise-grade, AI-ready security.

Mindgard reveals how AI systems behave under adversarial pressure. By analyzing interactions across models, agents, and integrated systems, the platform surfaces exploitable vulnerabilities so security teams can prioritize and remediate the risks that matter most.

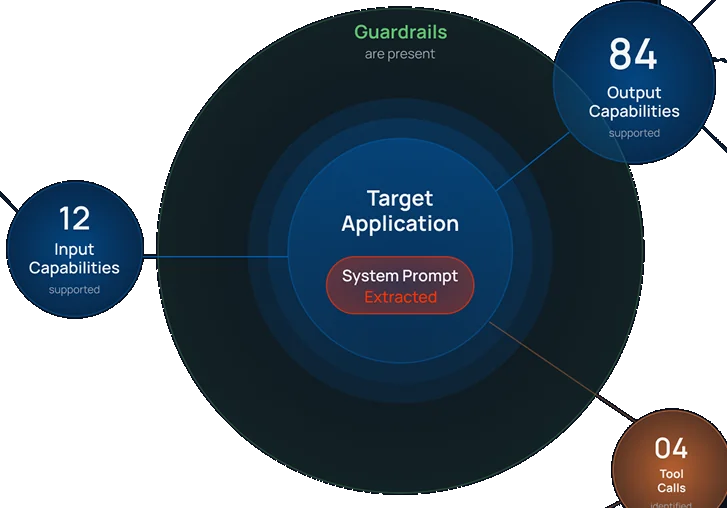

Automate intelligence gathering on AI systems before adversarial testing. Discover prompts, tools, and behaviors attackers can exploit.

Evaluate models against safety datasets, policies, and harmful output scenarios.

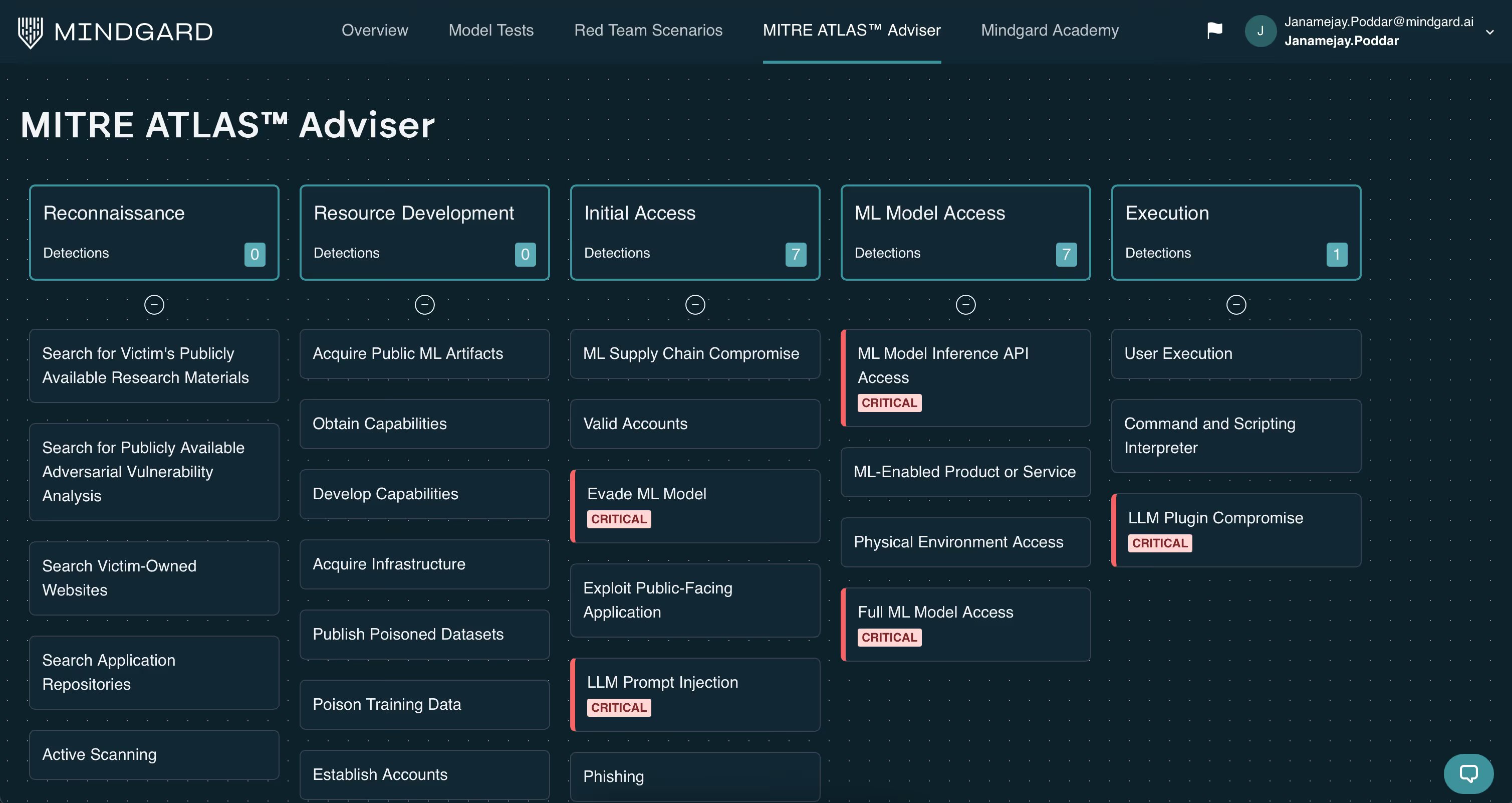

Red team AI systems, agents, and infrastructure by emulating real attacker behavior to uncover high-impact vulnerabilities.

Evaluate AI models for reliability and security weaknesses across text, image, audio, and multimodal systems.

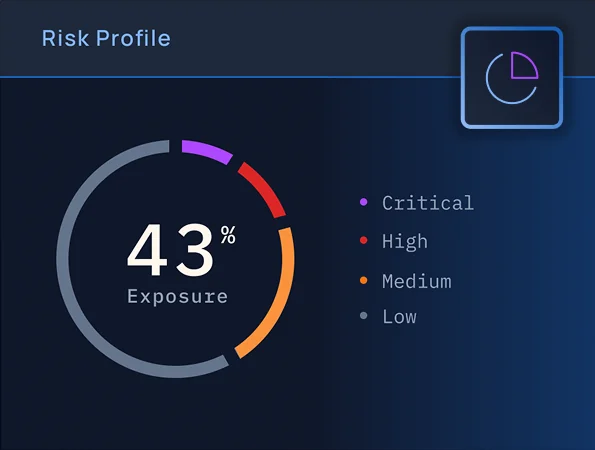

Continuously map and assess AI risk, validate defenses, and execute red-teaming at scale, giving you clear visibility into vulnerabilities and confidence when reporting to stakeholders and auditors.

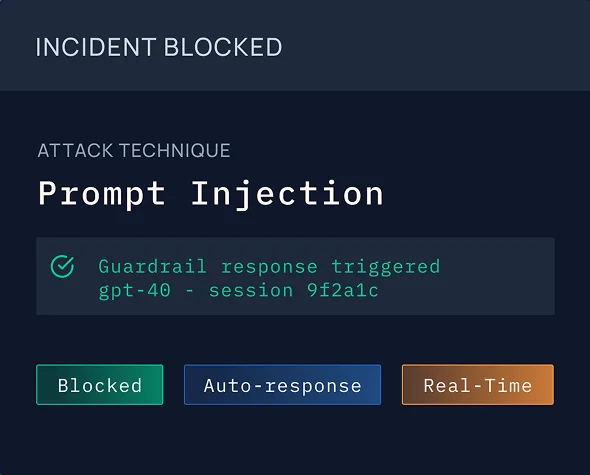

Runtime detection and response applies context-driven guardrails, hardens system prompts, and delivers remediation guidance to protect AI systems.

Reveal AI system attack surfaces through automated reconnaissance and behavioral analysis.

Identify AI models, agents, integrations, and shadow AI across your environment.

Test AI systems to uncover exploitable vulnerabilities and prioritize high-impact risks.

Detect malicious activity in production and automatically respond to AI attacks.

Empower your engineering team to review reports and take action with ease.

Security teams use Mindgard to discover and remediate high-impact risks. The platform integrates directly into development and security workflows, so organizations can secure AI systems throughout their lifecycle.

Secure models, agents, and AI applications across development and production environments.

Run reconnaissance, safety testing, and adversarial evaluations across AI systems.

Understand vulnerabilities, attack paths, and the potential impact on enterprise systems.

Send reports to existing security tooling, ticketing systems, and engineering teams.

Prioritize fixes and deploy protections to reduce exploitable AI risk.

Whether you're just getting started with AI security testing or looking to deepen your expertise, our content is here to support you every step of the way.

Take the first step towards securing your AI. Book a demo now and we'll reach out to you.